Language Models - The Basics

How does a machine learn human language?

- What is a language model?

- A brief history

- Text Preprocessing

- Vocabulary

- Lexical Semantics

- Vector Semantics

- Sequence Labeling

What is a language model?

The classic definition of a language model (LM) is a probability distribution over sequences of tokens. Suppose we have a vocabulary $\mathcal{V}$ of a set of tokens. A language model $p$ assigns each sequence of tokens $x_{1},…,x_{L}$ a probability: $p(x_{1},…,x_{L})$

The probability basically tells us how “good” a sequence of tokens is. For example, if the vocabulary is $\mathcal{V} = {ate,the,deer,grass,the}, the language model might assign (demo):

\[\begin{align*} p(\text{the,deer,ate,the,gass}) &=& 0.1 \\ p(\text{ate,the,deer,grass,the}) &=& 0.08 \\ p(\text{the,the,deer,grass,ate}) &=& 0.001 \end{align*}\]Language models are mathematically simple yet deceptive in their complexity. They require exceptional linguistic and world knowledge to assign meaningful probabilities to all sequences. It assigns low probability to ungrammatical sentences and higher probability to semantically plausible ones based on linguistic and world knowledge.

Generation:. As defined, a language model $p$ takes a sequence and returns a probability to assess its goodness. We can also generate a sequence given a language model. The simplest way to do this is to sample a sequence $x_{1:L}$ from the language model $p$ with probability equal to $p(x_{1:L})$. Efficient computational methods for language modeling depend on the form of the language model $p$. Sampling directly from a language model is not common due to limitations and the desire to obtain the best sequence rather than an average one.

Autoregressive Language Models

We can use the chain rule of probability to compute the joint probability distribution $p(x_{1:L})$ for a sequence $x_{1:L}$.

\[\begin{equation} p(x_{1:L}) = p(x_1)p(x_2|x_1)p(x_3|x_2,x_1)\ldots p(x_L|x_{1:L-1}) = \prod_{1}^{L}p(x_i|x_{1:i-1}) \end{equation}\]For example,

\[\begin{align*} p(\text{the,deer,ate,the,gass}) = &p(\text{the})& \\ &p(\text{deer}|\text{the})& \\ &p(\text{ate}|\text{the,deer})& \\ &p(\text{the}|\text{the,deer,ate})& \\ &p(\text{grass}|\text{the,deer,ate,the})& \\ \end{align*}\]An autoregressive language model is one where each conditional distribution $p(x_i|x_{1:i-1})$ can be computed efficiently.

Generation:. Now to generate an entire sequence $x_{1:L}$ from an autoregressive language model $p$, we sample one token at a time given the tokens generated so far:

\[\begin{align*} \text{for} \ \ i = &1,\ldots,L:& \\ x_i \sim &p(x_i|x_{1:i-1})^{1/T}& \end{align*}\]where T$\geq$ 0 is a temperature parameter that controls how much randomness we want from the language model:

- T=0: deterministically choose the most probable token x$_i$ at each position i.

- T=1: sample “normally” from the pure language model.

- T=$\infty$: sample from a uniform distribution over the entire vocabulary $\mathcal{V}$

However, once the probabilities are raised to the power of 1/T, we need to re-normalize the distribution to sum it back to 1. The normalized version \(p_{T}(x_i\|x_{1:i-1}) \propto p(x_i\|x_{1:i-1})^{1/T}\) is called the annealed conditional probability distribution.

Conditional generation:. More generally, we can perform conditional generation by specifying some prefix sequence $x_{1:i}$ (called a prompt) and sampling the rest $x_{i+1:L}$ (called the completion). Conditional generation unlocks the ability for language models to solve a variety of tasks by simply changing the prompt (sounds familiar??).

A brief history

Language models date back to Claude Shannon, when he introduced the entropy of a distribution as

The entropy measures the expected number of bits any algorithm needs to encode a sample $x \sim p$ into a bitstring.

- The lower the entropy, the more “structured” the sequence is, and the shorter the code length.

- $\log\frac{1}{p(x)}$ is the length of the code used to represent an element x that occurs with probability p(x).

Shannon’s particular interest was on quantifying the entropy of the English language by analyzing its letter sequences. In order to do this, he made the assumption that there exists a “true” probability distribution, denoted as p, that generates English text samples, represented as $x \sim p$. While the existence of this true distribution may be debatable, it is still a valuable mathematical concept that can aid in understanding the structure of the English language.

Shannon also defined the cross-entropy:

\[\begin{equation} H(p,q) = \sum_{x} p(x) \log\frac{1}{q(x)} \end{equation}\]which measures the expected number of bits (nats) needed to encode a sample $x \sim p$ using the compression scheme given by the model $q$ (representing x with a code of length $\frac{1}{q(x))}. The cross-entropy H(p,q) upper bounds the entropy H(p),

\[H(p,q) \geq H(p)\]which means that we can estimate H(p,q) by constructing a (language) model q with only samples from the true data distribution p, whereas H(p) is generally inaccessible if p is English. So we can get better estimates of the entropy H(p) by constructing better models q, as measured by H(p,q).

The Shannon Game

The Shannon Game is a word-guessing game that uses yes-or-no questions to maximize the amount of information gained with each answer. For example, player A chooses the word “pizza”. Player B asks the first question, “Is the word a type of food?” and A answers “yes”. B then asks, “Is the food typically eaten hot?” and A answers “yes” again. B narrows down the possibilities further with questions like “Is the food a type of sandwich?” and “Is the food a type of pasta dish?” until they guess the word “pizza”.

N-gram models

In an n-gram model, the prediction of a token $x_i$ only depends on the last n−1 characters $x_{i−(n−1):i−1}$ rather than the full history (also known as the Markov assumption

These probabilities are computed based on the number of times various n-grams occur in a large corpus of text, and appropriately smoothed to avoid overfitting. Fitting n-gram models to data is extremely computationally cheap and scalable. If n is too small, then the model will be incapable of capturing long-range dependencies, however, if n is too big, it will be statistically infeasible to get good estimates of the probabilities.

As a result, these language models were limited to tasks such as speech recognition and machine translation where the acoustic signal or source text provided enough information that only capturing local dependencies wasn’t a huge problem. Let’s use the “The Time Machine” by H. G. Wells as the dataset to explore the a few fetures of the n-gram models. The 10 most frequent words include:

vocab.token_freqs[:10]

[('the', 2261),

('i', 1267),

('and', 1245),

('of', 1155),

('a', 816),

('to', 695),

('was', 552),

('in', 541),

('that', 443),

('my', 440)]

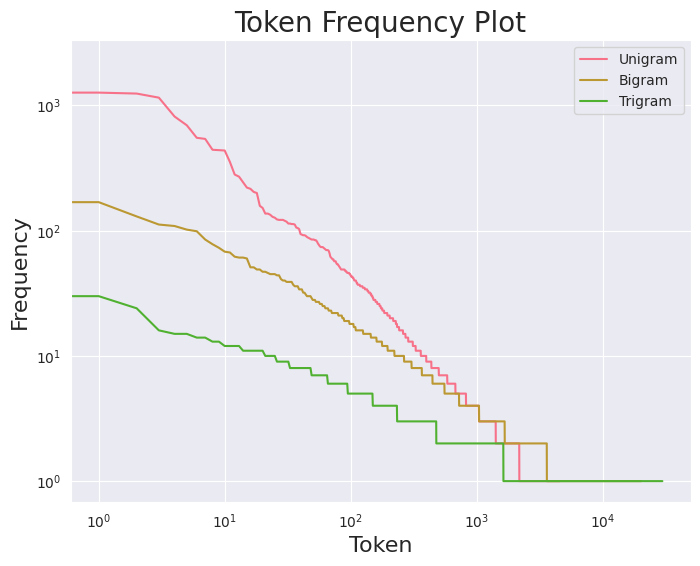

First, the word frequency decays rapidly in a well-defined way. After dealing with the first few words as exceptions (they are what we call stop words), all the remaining words roughly follow a straight line on a log-log plot. This means that words satisfy Zipf’s law, which states that the frequency

\[\begin{equation} \log n_i = -\log i + c \end{equation}\]where \(n_i\) is the frequency of the i\(^{th}\) word, where \(\alpha\) is the exponent that characterizes the distribution and $c$ is a constant. \(\alpha\) decreases with higher n in n-gram models, depending on the sequence length.

Second, the number of distinct n-grams is not that large. This suggests that there is quite a lot of structure in language (which is a good thing!).

Text Preprocessing

Text preprocessing is the process of transforming raw text data into a format that is suitable for analysis by a machine learning algorithm or other natural language processing (NLP) techniques. I will go through the major preprocessing steps although it varies with the type of dataset and ML algorithm to be used.

Tokenization

Tokenization is the process of breaking down a text into smaller units called tokens. These tokens can be words, phrases, or even individual characters depending on the task at hand. The goal of tokenization is to create a representation of the text that is easy to work with and analyze. A simple tokenize function for the “The Time Machine” dataset is shown below.

def tokenize(lines, token='char'): #@save

"""Split text lines into word or character tokens."""

if token == 'word':

return [line.split() for line in lines]

elif token == 'char':

return [list(line) for line in lines]

else:

print('ERROR: unknown token type: ' + token)

tokens = tokenize(lines, token='word')

for i in range(10,15):

print(tokens[i])

['twinkled', 'and', 'his', 'usually', 'pale', 'face', 'was', 'flushed', 'and', 'animated', 'the']

['fire', 'burned', 'brightly', 'and', 'the', 'soft', 'radiance', 'of', 'the', 'incandescent']

['lights', 'in', 'the', 'lilies', 'of', 'silver', 'caught', 'the', 'bubbles', 'that', 'flashed', 'and']

['passed', 'in', 'our', 'glasses', 'our', 'chairs', 'being', 'his', 'patents', 'embraced', 'and']

['caressed', 'us', 'rather', 'than', 'submitted', 'to', 'be', 'sat', 'upon', 'and', 'there', 'was', 'that']

Tokenization is not always a simple process because determining the boundaries of words can be difficult, especially in symbol-based languages like Chinese, Japanese, Korean, and Thai. Other challenges include handling symbols, such as “$100,” and contractions, like “you’re” and “I’m,” that can significantly change the meaning of a word.

One commonly used tokenization standard is known as the Penn Treebank tokenization standard, used for the parsed corpora (treebanks) released by the Linguistic Data Consortium (LDC), the source of many useful datasets. This standard separates out clitics (doesn’t becomes does plus n’t), keeps hyphenated words together, and separates out all punctuation.

Instead of using predefined word or character tokens, modern tokenizers automatically identify token sets, including subwords, which can be arbitrary substrings or morphemes. This approach helps handle unknown words by representing them with known subword units or individual letters, improving language processing.

Tokenization schemes usually consist of two parts: a token learner and a token segmenter. The learner generates a vocabulary, a set of tokens, from a raw training corpus, while the segmenter breaks down raw test sentences into the tokens in the vocabulary. The most commonly used algorithms are byte-pair encoding

Byte-Pair Encoding

In BPE, the algorithm starts by dividing the input text into individual characters or tokens. It then iteratively merges the most frequent pair of adjacent tokens until a desired vocabulary size is reached. For example, given the input text “banana”, the algorithm might start by dividing it into individual characters: “b”, “a”, “n”, “a”, “n”, “a”. It would then count the frequency of each pair of adjacent tokens and merge the most frequent pair, replacing it with a new token. In this case, the most frequent pair is “na”, which appears twice. The algorithm would merge “na” into a new token, replacing the two occurrences in the original text with the new token “x”. The text would then become “b”, “a”, “x”, “x”.

WordPiece

WordPiece initializes the vocabulary with all characters in the training data and learns merge rules that maximize likelihood once added to the vocabulary, instead of choosing the most frequent symbol pair like BPE.

Unigram

In contrast to BPE or WordPiece, Unigram initializes its base vocabulary to a large number of symbols and progressively trims down each symbol to obtain a smaller vocabulary. The Unigram algorithm computes a loss over training data based on current vocabulary and language model, and removes a percentage of symbols with the lowest loss increase until the vocabulary reaches the desired size.

Text Normalization

Word normalization is the task of putting words/tokens in a standard format, choosing a single normal form for words with multiple forms like USA and US or converting all characters to lowercase (also called case folding). The standardization may be valuable, despite the spelling information that is lost in the normalization process.

Lemmatization

Lemmatization is the task of determining that two words have the same root, despite their surface differences. The words dinner and dinners both have the lemma dinner. The most sophisticated methods for lemmatization involve complete morphological parsing of the word. Morphology is the study of the way words are built up from smaller meaning-bearing units called morphemes. Two broad classes of morphemes can be distinguished: stems—the central morpheme of the word, supplying the main meaning—and affixes—adding “additional” meanings of various kinds.

Vocabulary

To allow models that take numerical inputs to use string tokens, we build a dictionary or vocabulary that maps each unique token to a numerical index starting from 0. We count the unique tokens in the corpus, assign a numerical index based on their frequency, and remove rarely appearing tokens. Any token not in the corpus or removed is mapped to a special unknown token $\texttt{< unk >}$. We can also add reserved tokens like $\texttt{< pad >}$, $\texttt{< bos >}$, and $\texttt{< eos >}$ for padding, beginning of a sequence, and end of a sequence, respectively.

However, the vocabulary of a language model is typically limited to a fixed size due to computational constraints, which means that not all words can be included in the vocabulary. This leads to some serious issues!!

The Problem of Zeros

The issue of zeros in language models’ vocabulary arises from words in test data not present in training data, leading to out-of-vocabulary (OOV) tokens. These zeros underestimate the probability of various words, hurting performance and causing difficulties in handling completely unknown words. Even worse are the zeros for the unknown words that have never been seen by the model at all!

Possible Solutions for unkown words

- We work with a closed vocabulary assuming that we already know all the words that can occur.

- An open vocabulary is more practical than a closed vocabulary for most real-world scenarios. In an open vocabulary, words that haven’t been seen before are referred to as out of vocabulary (OOV) words. During training, any word that is not in the training set (an OOV word) is converted to the unknown word token

in a text normalization step. We estimate the probabilities for from its counts, treating it like any other regular word in the training set.

Smoothing

Zero probability can also pop up for words which are present in the vocabulary but appear in a different context in the test set. To avoid this, we shave off a bit of probability mass from some more frequent events and give it to the events we’ve never seen. This modification is called smoothing or discounting.

Laplace Smoothing: The basic idea behind Laplace smoothing is to add a small constant value (usually one) to each count in the frequency table before calculating probabilities which can help to avoid problems with zero counts.

Add-k smoothing: Add-k smoothing, also known as Lidstone smoothing, is a generalization of Laplace smoothing. Instead of adding a fixed constant (usually one) to all counts in a frequency table, we add a small value k to each count. This value k is usually a fraction between 0 and 1, and is often chosen using cross-validation or other optimization techniques.

Backoff: Backoff is a simple technique that involves estimating the probability of a sequence of words by looking at shorter subsequences of the same sequence. For example, to estimate the probability of the sentence “The cat sat on the mat”, we might start by looking at the probability of “The cat sat on” and “cat sat on the” and “sat on the mat”, and so on. If we do not have enough data to estimate the probability of a longer sequence, we “back off” to a shorter sequence and use that estimate instead.

Interpolation: Interpolation is a technique used to estimate the probability of a sequence of words by combining probability estimates from multiple sources or models. The idea is to combine these estimates in a way that takes into account their relative strengths and weaknesses.

For example, suppose we have two language models. One model estimates the probability of a word based on its frequency in the training data, while the other model estimates the probability of a word based on its position in the sentence.

To estimate the probability of a sentence using interpolation, we would first calculate the probability of each word in the sentence using both models. We would then combine these probabilities in a weighted average where the weights are hyperparameters of the model.

Kneser-Ney Smoothing

The basic idea behind Kneser-Ney Smoothing is to estimate the probability of a word given its context, rather than just its frequency. The Kneser-Ney smoothing algorithm is typically used with trigram or higher order N-grams, as these are less likely to be present in the training data. The algorithm consists of three main steps:

- Counting: Count the frequency of each N-gram in the training data.

- Discounting: Subtract a constant discount value from the count of each N-gram.

- Interpolation: Use a weighted combination of the discounted probability estimates for each N-gram to estimate the probability of a sequence of words.

The Kneser-Ney smoothing algorithm has been shown to outperform other smoothing techniques in many language modeling tasks, such as speech recognition and machine translation. It is also relatively efficient and can be trained on large datasets.

Evaluating language models

Conducting extrinsic evaluation by embedding a language model in an application is the most effective way to measure its performance. However, this approach can be costly. Intrinsic evaluation, which measures the quality of a model independent of any application, provides a quick and convenient metric to evaluate potential improvements. For an intrinsic evaluation of a language model we need a test set.

Perplexity

The perplexity (sometimes called PPL for short) of a language model on a test set is the inverse probability of the test set, normalized by the number of words.

\[\begin{equation} PP(W) = \sqrt[N]{\frac{1}{P(w_1w_2...w_N)}} = \sqrt[N]{\prod_{i=1}^{N}\frac{1}{P(w_i|w_{i-1}w_{i-2}...w_1)}} \end{equation}\]Because of the inverse, the higher the conditional probability of the word sequence, the lower the perplexity. Thus, minimizing perplexity is equivalent to maximizing the test set probability according to the language model.

Lexical Semantics

Lexical semantics is a subfield of linguistics that studies the meaning of words and how they relate to each other. It focuses on the analysis of the vocabulary and how words contribute to the meaning of a sentence. For example, if we look up for mouse in a dictionary, it might be defined as,

- any of numerous small rodents…

- a hand-operated device that controls a cursor…

Here the form mouse is the lemma, also called the citation form. The form mouse would also be the lemma for the word mice; dictionaries don’t have separate definitions for inflected forms like mice (called wordforms). Each lemma can have multiple meanings (polysemous); the lemma mouse can refer to the rodent or the cursor control device. We call each of these aspects of the meaning of mouse a word sense.

Synonymy: Two words are synonymous if they are substitutable for one another in any sentence without changing the truth conditions of the sentence, the situations in which the sentence would be true. We often say in this case that the two words have the same propositional meaning. Substitutions between some pairs of words like car / automobile are truth preserving, but the words are still not identical in meaning. In practice, the word syn- onym is therefore used to describe a relationship of approximate or rough synonymy.

Word similarity

Word similarity is a crucial component in semantic tasks like question answering, paraphrasing, and summarization. Although words may not have many synonyms, they often have numerous similar words. Understanding the degree of similarity between two words can aid in calculating the similarity of the meaning of two phrases or sentences.

Word relatedness

Word relatedness extends beyond similarity, often involving words belonging to the same semantic field. A semantic field comprises a set of words covering a specific domain and maintaining structured relations. For instance, words in the semantic fields of hospitals (surgeon, scalpel, nurse) or houses (door, roof, kitchen) exhibit relatedness.

Connotations

Words have affective meanings or connotations to mean the aspects of a word’s meaning that are related to a writer or reader’s emotions, sentiment, opinions, or evaluations. For example some words have positive connotations (happy) while others have negative connotations (sad). Positive or negative evaluation language is called sentiment.

Vector Semantics

Vector semantics, a standard NLP approach, represents word meaning as a point in a multidimensional semantic space based on word neighbor distributions. These vector representations, called embeddings, capture contextual patterns, assuming words in similar contexts have similar meanings.

Cosine simialrity

To measure similarity between two target words $v$ and $w$, we need a metric that takes two vectors (of the same dimensionality, either both with words as dimensions, or both with documents as dimensions) and gives a measure of their similarity. The cosine similarity is based on the dot product operator from linear algebra, also called the inner product:

\[\begin{equation} v \cdot w = \sum_{i=1}^{n} v_i w_i \end{equation}\]The dot product serves as a similarity metric, with high values for vectors sharing large values in the same dimensions. However, it favors longer vectors, causing frequent words to have higher raw dot products. By normalizing the dot product with vector lengths, the issue is resolved, yielding a metric equivalent to the cosine of the angle between the vectors.

\[\begin{equation} \text{cosine similarity}(v, w) = \frac{v \cdot w}{\lVert v \rVert \lVert w \rVert} = \frac{\sum_{i=1}^{n} v_i w_i}{\sqrt{\sum_{i=1}^{n} v_i^2} \sqrt{\sum_{i=1}^{n} w_i^2}} \end{equation}\]TF-IDF

The tf-idf weighting is the product of two terms, each term capturing one of the two intuitions:

-

The first is the term frequency: the frequency of the word $t$ in the document $d$.

\[tf_{t,d} = \text{count}(t,d)\]More generally, we use the log$_{10}$ of the frequency instead keeping in mind that a word appearing 100 times in a document doesn’t make that word 100 times more likely to be relevant to the meaning of the document.

-

The second factor in tf-idf is used to give a higher weight to words that occur only in a few documents. Terms that are limited to a few documents are useful for discriminating those documents from the rest of the collection. The document frequency df$_t$ of a term t is the number of documents it occurs in.

Document frequency is not the same as the collection frequency of a term, which is the total number of times the word appears in the whole collection in any document. The inverse document frequency (idf) is defined using the fraction \(\frac{N}{df_{t}}\), where $N$ is the total number of documents in the collection, and df$_t$ is the number of documents in which term t occurs. Because of the large number of documents in many collections, this measure too is usually squashed with a log function.

The tf-idf weighted value w$_{t,d}$ for word t in document d thus combines both the term frequency and the inverse document frequency.

\[\begin{equation} w_{t,d} = tf_{t,d} \times idf_t \end{equation}\]Pointwise mutual information (PMI)

Pointwise mutual information is one of the most important con- cepts in NLP. It is a measure of how often a target word $w$ and a context word $c$ occur, compared with what we would expect if they were independent:

\[\begin{equation} PMI(w,c) = \log_{2}\frac{P(w,c)}{P(w)P(c)} \end{equation}\]Word2Vec

Word2Vec was first introduced by Tomas Mikolov and his colleagues at Google in 2013

Dense vectors are superior to sparse vectors in NLP tasks, possibly due to the lower number of weights needed to represent words in a 300-dimensional space compared to a 50,000-dimensional space, which could aid in generalization and prevent overfitting. Dense vectors may also capture synonymy better, unlike sparse vectors which may fail to represent the similarity between words with distinct dimensions for synonyms.

Word2Vec relies on a binary classification task to learn embeddings, rather than counting co-occurrences. The idea is to train a classifier to predict whether a word is likely to appear near another word. The learned weights of the classifier are then used as embeddings. The innovative aspect of this approach is the use of running text as implicitly supervised training data, which avoids the need for hand-labeled supervision. This method is often referred to as self-supervision.

The Word2Vec algorithm consists of two models: the Continuous Bag-of-Words (CBOW) model and the Skip-gram model. The CBOW model predicts a target word based on its context (surrounding words), while the Skip-gram model predicts the context words given a target word. Both models learn to predict words based on the distributional hypothesis, which states that words that appear in similar contexts tend to have similar meanings.

Skip-gram

The intuition of skip-gram is:

- Treat the target word and a neighboring context word as positive examples.

- Randomly sample other words in the lexicon to get negative samples.

- Use logistic regression to train a classifier to distinguish those two cases.

- Use the learned weights as the embeddings.

Word2vec embeddings are static embeddings, meaning that the method learns one fixed embedding for each word in the vocabulary. There exist dynamic contextual embeddings like the popular family of BERT representations, in which the vector for each word is different in different contexts. I will get back to embeddings in a lot more details later.

Sequence Labeling

Tasks in which we assign, to each word $x_i$ in an input word sequence, a label $y_i$, so that the output sequence Y has the same length as the input sequence X are called sequence labeling tasks.

Part-of-speech tagging

Part-of-speech tagging is the process of assigning a part-of-speech to each word in a text. The input is a sequence $x_1,x_2,\dots,x_n$ of (tokenized) words and a target, and the output is a sequence $y_1,y_2,\ldots,y_n$ of tags, each output $y_i$ corresponding exactly to one input $x_i$.

The difficulty lies in the fact that tagging is a disambiguation task; words are ambiguous — have more than one possible part-of-speech—and the goal is to find the correct tag for the situation.

Named Entity Tagging

A named entity is, roughly speaking, anything that can be referred to with a proper name: a person, a location, an organization. The task of named entity recognition (NER) is to find spans of text that constitute proper names and tag the type of the entity. Four entity tags are most common: PER (person), LOC (location), ORG (organization), or GPE (geo-political entity).

Named entity tagging is a crucial step in many natural language processing tasks. It helps to identify entities such as people, organizations, and locations in text, which is useful for tasks like sentiment analysis, question answering, and building semantic representations. It also facilitates linking text to structured knowledge sources like Wikipedia.

Hidden markov models (HMM)

An HMM is a probabilistic sequence model: given a sequence of units (words, letters, sentences, whatever), it computes a probability distribution over possible sequences of labels and chooses the best label sequence. The HMM is based on augmenting the Markov chain. A markov chain is a type of stochastic process that assumes that the future state of the system depends only on the current state and not on any states that occurred before it.

Formally, a Markov chain is specified by the following components:

- Q = $q_1q_2\ldots q_N$ - a set of N states

- A = $a_{11}a_{12}\ldots a_{N1}\ldots a_{NN}$ - a transition probability matrix A, each a$_{ij}$ represent a probability of moving from state i to j s.t. \(\sum_{j=1}^{n}a_{ij} = 1 \forall i\)

- $\pi = \pi_1pi_2\ldots \pi_N$ - an initial probability distribution over states. $\pi_{i}$ is the probability that the Markov chain will start in state i. Also \(\sum_{i=1}^{n}\pi_{i} = 1\)

On the other hand, an HMM is specified by the following components:

- Q = $q_1q_2\ldots q_N$ - a set of N states

- A = $a_{11}a_{12}\ldots a_{N1}\ldots a_{NN}$ - a transition probability matrix A, each a$_{ij}$ represent a probability of moving from state i to j s.t. \(\sum_{j=1}^{n}a_{ij} = 1 \forall i\)

- O = $o_1o_2\ldots o_T$ - a sequence of T observations, each one drawn from a vocabulary V = $v_1v_2\ldots v_V$

- B = $b_i(o_t)$ - a sequence of observation likelihoods, also called emission probabilities, each expressing the probability of an observation $o_t$ being generated from a state $q_i$

- $\pi = \pi_1pi_2\ldots \pi_N$ - an initial probability distribution over states. $\pi_{i}$ is the probability that the Markov chain will start in state i. Also \(\sum_{i=1}^{n}\pi_{i} = 1\)

For models like HMM, that contains hidden variables, the task of determining the hidden variables sequence corresponding to the sequence of observations is called decoding. More formally,

- Decoding: Given as input an HMM $\lambda$ = (A, B) and a sequence of observations $O = o_1 , o_2 ,\ldots, o_T$, find the most probable sequence of states $ Q = q_1q_2q_3\ldots q_T $.

For part-of-speech tagging, the goal of HMM decoding is to choose the tag sequence $t_1\ldots t_n$ that is most probable given the observation sequence of n words $w_1\ldots w_n$.

\[\begin{equation} \hat{t}_{1:n} = \underset{t_1\ldots t_n}{\text{argmax}}P(t_1\ldots t_n|w_1\ldots w_n) \end{equation}\]We can use the Bayes’ rule to compute:

\[\begin{equation} \hat{t}_{1:n} = \underset{t_1\ldots t_n}{\text{argmax}}\frac{P(w_1\ldots w_n|t_1\ldots t_n)P(t_1\ldots t_n)}{P(w_1\ldots w_n)} \end{equation}\]The denominator can be dropped (a constant). On top of that, HMM taggers make two further simplifying assumptions.

- The first is that the probability of a word appearing depends only on its own tag and is independent of neighboring words and tags:

The second assumption, the bigram assumption, is that the probability of a tag is dependent only on the previous tag, rather than the entire tag sequence;

\[\begin{equation} P(t_1\ldots t_n) \approx \prod_{i=1}^{n}P(t_{i}|t_{i-1}) \end{equation}\]Plugging it back all together

\[\begin{equation} \hat{t}_{1:n} = \underset{t_1\ldots t_n}{\text{argmax}}P(w_1\ldots w_n|t_1\ldots t_n) \approx \underset{t_1\ldots t_n}{\text{argmax}}\prod_{i}^{n}P(w_{i}|t_{i})P(t_{i}|t_{i-1}) \end{equation}\]The two parts correspond to the emission probability B and transition probability A that we just defined above!

The Viterbi algorithm

The decoding algorithm for HMMs is the Viterbi algorithm which is a dynamic programming algorithm. The Viterbi algorithm works as follows:

- Initialization: For each state in the HMM, calculate the probability of starting in that state and emitting the first observation from the sequence.

- Recursion: Iterate through the remaining observations in the sequence. For each observation, calculate the probability of transitioning to a new state, given the previous state probabilities and the current observation. Keep track of the most likely path to reach each state at the current observation.

- Termination: Identify the most likely final state, and then backtrack through the stored paths to find the most likely sequence of hidden states that generated the observations.

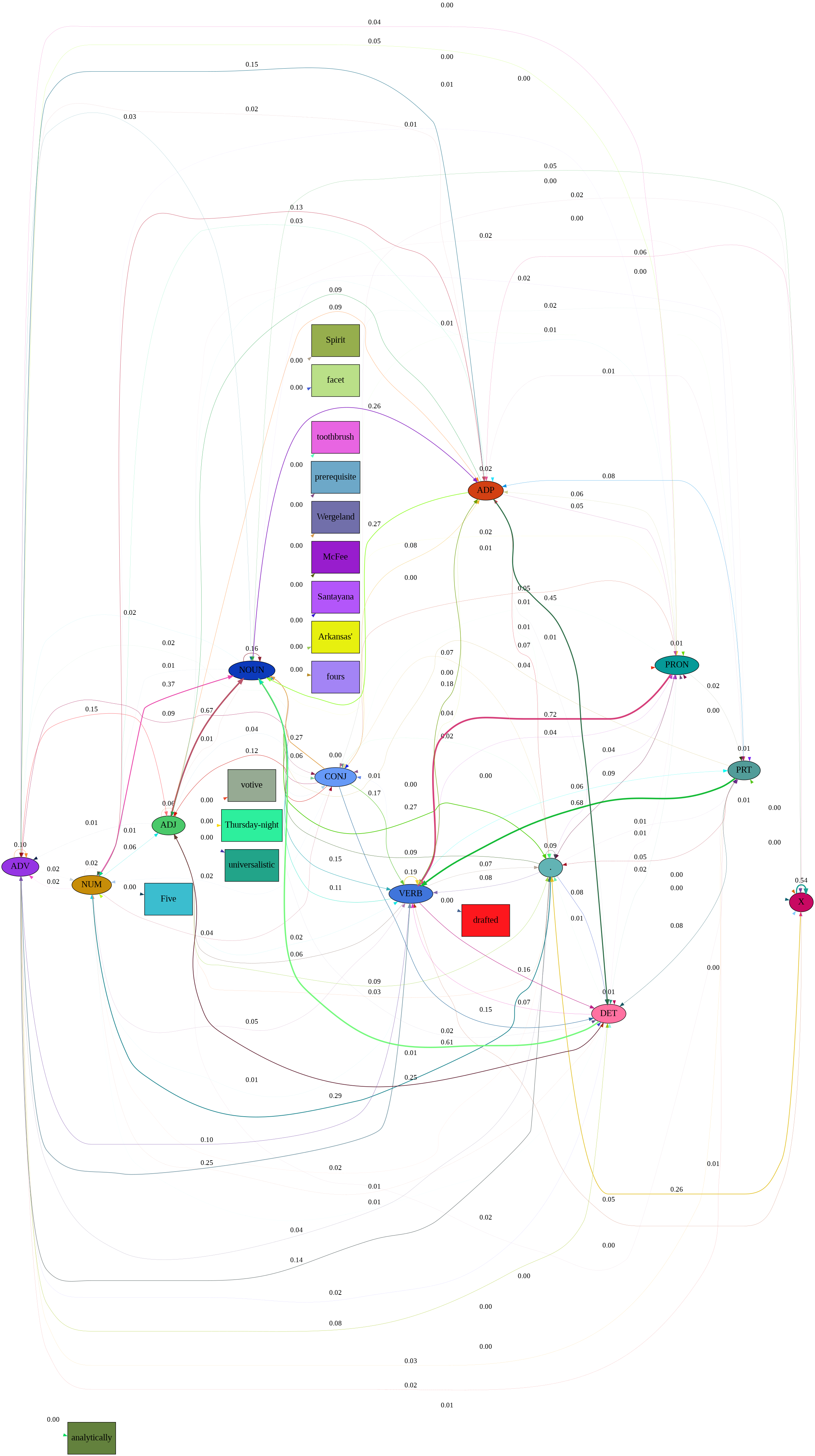

Below is a python implemetation of a Hidden Markov model and the Viterbi algorithm as well as the visualization of the model graph.

import numpy as np

from collections import defaultdict

from nltk.corpus import brown

class HiddenMarkovModel:

def __init__(self):

# Initialize sets for tags and words

self.tags = set()

self.words = set()

# Initialize dictionaries for transition and emission probabilities

self.transition_probs = defaultdict(lambda: defaultdict(float))

self.emission_probs = defaultdict(lambda: defaultdict(float))

def train(self, tagged_sentences):

# Initialize dictionaries for tag counts and word counts per tag

tag_counts = defaultdict(int)

tag_word_counts = defaultdict(lambda: defaultdict(int))

# Iterate over sentences and their corresponding tags in the dataset

for sentence in tagged_sentences:

prev_tag = None

# Iterate over words and tags in a sentence

for word, tag in sentence:

self.words.add(word)

self.tags.add(tag)

# Update tag counts and word counts per tag

tag_counts[tag] += 1

tag_word_counts[tag][word] += 1

# Update transition probabilities

if prev_tag:

self.transition_probs[prev_tag][tag] += 1

prev_tag = tag

# Calculate transition probabilities

for tag in self.tags:

for next_tag in self.tags:

self.transition_probs[tag][next_tag] /= tag_counts[tag]

# Calculate emission probabilities

for word in self.words:

self.emission_probs[tag][word] = tag_word_counts[tag][word] / tag_counts[tag]

def viterbi(self, sentence):

n = len(sentence)

m = len(self.tags)

tags_list = list(self.tags)

# Initialize dynamic programming table and backpointers table

dp = np.zeros((n, m))

backpointers = np.zeros((n, m), dtype=int)

# Fill in the first row of the dynamic programming table

for i, tag in enumerate(tags_list):

dp[0][i] = self.transition_probs['<START>'][tag] * self.emission_probs[tag][sentence[0]]

# Iterate through the rest of the sentence

for t in range(1, n):

for i, tag in enumerate(tags_list):

max_prob, max_idx = 0, 0

# Iterate over previous tags

for j, prev_tag in enumerate(tags_list):

prob = dp[t - 1][j] * self.transition_probs[prev_tag][tag] * self.emission_probs[tag][sentence[t]]

if prob > max_prob:

max_prob = prob

max_idx = j

# Update the dynamic programming table and backpointers table

dp[t][i] = max_prob

backpointers[t][i] = max_idx

# Backtrack to find the best sequence of tags

best_tags = []

best_last_tag = np.argmax(dp[n - 1])

best_tags.append(tags_list[best_last_tag])

for i in range(n - 1, 0, -1):

best_prev_tag = backpointers[i][best_last_tag]

best_tags.append(tags_list[best_prev_tag])

best_last_tag = best_prev_tag

return list(reversed(best_tags))

Conditional Random Fields (CRF)

Assuming we have a sequence of input words X = $x_1 \ldots x_n$ and want to compute a sequence of output tags Y = $y_1 \ldots y_n$. In an HMM to compute the best tag sequence that maximizes $P(Y|X)$ we rely on Bayes’ rule and the likelihood $P(X|Y)$:

\[\begin{align*} \hat{Y} = &\underset{Y}{\text{argmax}}P(Y|X)& \\ &\underset{Y}{\text{argmax}}P(X|Y)P(Y)& \\ &\underset{Y}{\text{argmax}}\prod_{i}^{n}P(x_i|y_i)\prod_{i}^{n}P(y_i|y_{i-1})& \end{align*}\]In a CRF, by contrast, posterior $P(Y|X)$ is calculated directly. A CRF is a log-linear model that assigns a probability to an entire output (tag) sequence Y , out of all possible sequences $\mathcal{Y}$, given the entire input (word) sequence X. The key component of a linear-chain CRF is the feature function, which captures the relationships between the input sequence, label sequence, and the position within the sequence. Let’s denote $f_k(y_{i-1}, y_i, X, i)$ as a feature function, where:

- k: Index of the feature function

- $y_{i-1}$: Previous label

- $y_i$: Current label

- X: Input sequence

- i: Position in the sequence

CRFs define the conditional probability of a label sequence Y given an input sequence X as: \(\begin{align*} P(Y|X) &= \frac{1}{Z(X)} \exp \left( \sum_{i=1}^n \sum_{k=1}^K \lambda_k f_k(y_{i-1}, y_i, X, i) \right) \\ Z(X) &= \sum_Y \exp \left( \sum_{i=1}^n \sum_{k=1}^K \lambda_k f_k(y_{i-1}, y_i, X, i) \right) \end{align*}\)

Here,

- n: Length of the input sequence

- K: Total number of feature functions

- $\lambda_k$: Weight associated with the k$^{th}$ feature function

- Z(X): Partition function, defined as the sum of the probabilities of all possible label sequences for the input sequence X. It ensures that the probabilities sum to 1.

The main goal of training a CRF is to learn the optimal weights ($\lambda_k$) for each feature function to maximize the conditional probability of the correct label sequence given the input sequence. Training is usually performed using maximum likelihood estimation, which involves maximizing the log-likelihood of the observed data with respect to the model parameters ($\lambda_k$). This optimization can be done using various algorithms, such as gradient-based methods (e.g., L-BFGS, stochastic gradient descent) or expectation-maximization (EM).

Once the model is trained, we can use the Viterbi algorithm or other inference techniques to find the most likely label sequence given a new input sequence.

I believe I have covered the essential building blocks of language models and various methods for representing and structuring human languages, enabling computers to effectively and seamlessly understand, interpret, generate, and interact with human language. I intentionally omitted neural language models in this discussion, as I plan to explore them in-depth from scratch in my next post.

In writing this blog, I’ve extensively followed Dan Jurafsky’s book on language models

Lastly, I’ve included a Jupyter notebook in which I’ve implemented some of the core methods discussed above using Python, providing a practical understanding of how these techniques work.