Deep Learning for Speech Recognition

Overview of speech processing research, Part 1.

With the emergence of deep learning, the domain of speech processing has experienced a revolutionary change. Multilayered processing techniques have led to models that can extract sophisticated features from speech data. This progression has driven major advances in several areas, including automatic speech recognition, text-to-speech synthesis, and emotion detection. Deep learning methods have also opened up new research opportunities in speech processing, with implications for a wide variety of industries and applications.

In this article, I will be talking about the basics of speech signals, speech synthesis, and automatic speech recognition.

Speech sound waves

The initial phase in speech processing involves transforming analog signals (initially air pressure, and subsequently analog electrical signals in a microphone) into a digital signal. This analog-to-digital conversion is a two-step procedure: sampling and quantization. Sampling is the process of measuring the signal’s amplitude at a specific moment; the sampling rate is the number of samples taken per second.

To measure a wave accurately, we need at least two samples per cycle: one for the positive part and another for the negative part. Having more than two samples per cycle improves amplitude accuracy, but having fewer means the wave’s frequency can be missed entirely. The highest measurable frequency, termed the Nyquist frequency, is therefore half the sampling rate, since each cycle requires two samples.
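To make the Nyquist limit concrete, here is a small NumPy sketch. The 8000 Hz sampling rate and the two test tones are illustrative choices of mine, and `dominant_frequency` is a hypothetical helper, not part of any library. It shows that a tone above the Nyquist frequency is indistinguishable from a lower one after sampling.

```python
import numpy as np

def dominant_frequency(signal, sample_rate):
    """Return the frequency (Hz) of the strongest bin in the magnitude spectrum."""
    spectrum = np.abs(np.fft.rfft(signal))
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate)
    return freqs[np.argmax(spectrum)]

sample_rate = 8000                         # samples per second (illustrative)
nyquist = sample_rate / 2                  # highest measurable frequency: 4000 Hz
t = np.arange(sample_rate) / sample_rate   # sample times for one second of audio

below = np.sin(2 * np.pi * 3000 * t)       # 3000 Hz tone, below the Nyquist frequency
above = np.sin(2 * np.pi * 5000 * t)       # 5000 Hz tone, above the Nyquist frequency

f_below = dominant_frequency(below, sample_rate)
f_above = dominant_frequency(above, sample_rate)

# The 5000 Hz tone has fewer than two samples per cycle, so it aliases
# to 8000 - 5000 = 3000 Hz and looks identical in frequency to the first tone.
print(f_below, f_above)
```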

For sound waves, efficient storage is critical. For example, an 8000 Hz sampling rate necessitates 8000 amplitude measurements per second of speech. Typically, these are stored as 8-bit or 16-bit integers. The process of mapping real numbers to integers is known as quantization, where there’s a minimum granularity (quantum size), and values closer than this size are represented identically.
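As a rough illustration of linear quantization, the sketch below maps floating-point samples in [-1, 1] to 8-bit or 16-bit signed integers and back. The function names, the [-1, 1] input range, and the rounding scheme are my own assumptions; real audio formats differ in the details.

```python
import numpy as np

def quantize(signal, n_bits):
    """Map floats in [-1.0, 1.0] to signed integers (n_bits must be 8 or 16 here)."""
    max_int = 2 ** (n_bits - 1) - 1                 # 127 for 8 bits, 32767 for 16 bits
    clipped = np.clip(np.asarray(signal, dtype=float), -1.0, 1.0)
    dtype = np.int8 if n_bits <= 8 else np.int16
    return np.round(clipped * max_int).astype(dtype)

def dequantize(codes, n_bits):
    """Map integer codes back to floats in [-1.0, 1.0]."""
    max_int = 2 ** (n_bits - 1) - 1
    return codes.astype(np.float64) / max_int

# Two values closer together than the 8-bit quantum size (1/127) get the same code.
print(quantize(np.array([0.3, 0.301]), 8))          # both map to 38
```

With 16 bits the quantum size shrinks to 1/32767, so the round-trip error is correspondingly smaller.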

The last consideration is whether to store each sample linearly or to compress it. A popular compression format for telephone speech is μ-law. It leverages the idea that human hearing is more sensitive to small intensities; hence, it represents smaller values more accurately, while allowing more error on larger values.
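The idea behind μ-law can be sketched with the standard continuous companding formula, F(x) = sgn(x) · ln(1 + μ|x|) / ln(1 + μ) with μ = 255. Note that this is only the textbook formula followed by a uniform 8-bit quantizer of my own choosing; it is not the exact bit layout used by telephone codecs.

```python
import numpy as np

MU = 255  # value conventionally used for 8-bit mu-law

def mu_law_compress(x, mu=MU):
    """Logarithmically compress samples in [-1, 1]; output is also in [-1, 1]."""
    x = np.asarray(x, dtype=float)
    return np.sign(x) * np.log1p(mu * np.abs(x)) / np.log1p(mu)

def mu_law_expand(y, mu=MU):
    """Exact inverse of mu_law_compress."""
    y = np.asarray(y, dtype=float)
    return np.sign(y) * np.expm1(np.abs(y) * np.log1p(mu)) / mu

def mu_law_encode(x, mu=MU):
    """Compress, then quantize uniformly to integer codes 0..255 (illustrative layout)."""
    y = mu_law_compress(x, mu)
    return np.round((y + 1) / 2 * mu).astype(np.uint8)

def mu_law_decode(codes, mu=MU):
    """Map codes 0..255 back to [-1, 1] and undo the compression."""
    y = codes.astype(np.float64) / mu * 2 - 1
    return mu_law_expand(y, mu)
```

Because the compression curve is steep near zero, the 8-bit codes are packed densely around small amplitudes and sparsely around large ones, which is the trade-off described above.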

Frequency and amplitude

The fundamental frequency, often referred to as the “first harmonic” or “natural frequency,” is the lowest frequency in a periodic waveform. It’s the most significant frequency component of a complex waveform and is often the frequency that is perceived as the pitch of a sound in music or speech.

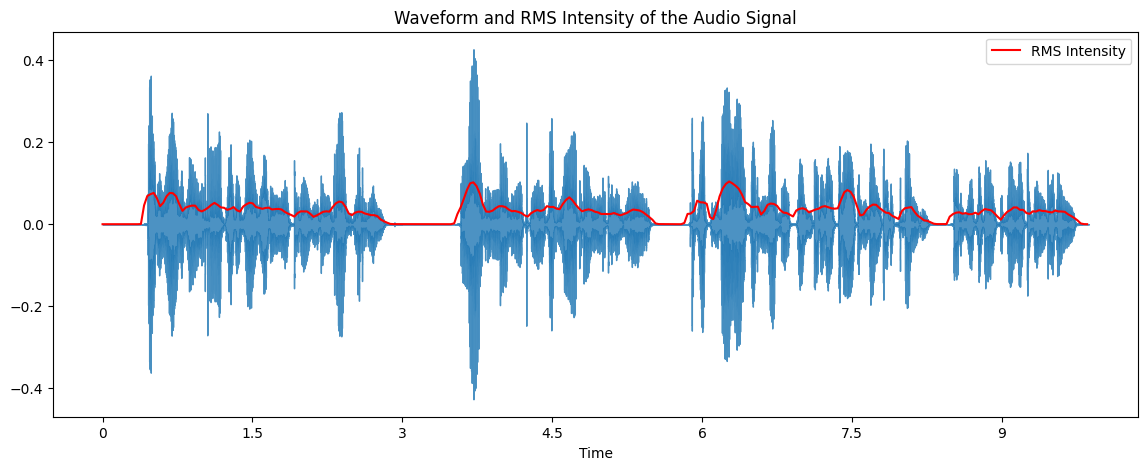

Root Mean Square (RMS) amplitude is an “average” measure of a sound wave’s magnitude. It quantifies the energy or loudness of the sound. If we have a waveform described by a set of n samples x[i] (where i ranges from 1 to n), the RMS amplitude (A_rms) is calculated as:

\[\begin{align} A_{rms} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}(x[i])^2} \end{align}\]

The power of the signal is related to the square of the amplitude. If the number of samples of a sound is n, the power is

\[\begin{align} Power = \frac{1}{n}\sum_{i=1}^{n}(x[i])^2 \end{align}\]

Rather than power, we more often refer to the intensity of the sound, which normalizes the power to the human auditory threshold and is measured in dB. If $P_0$ is the auditory threshold pressure, $P_0 = 2 \times 10^{-5}$ Pa, then intensity is defined as follows:

\[\begin{align} Intensity = 10 \cdot \log_{10} \left( \frac{1}{n P_0}\sum_{i=1}^{n}(x[i])^2 \right) \end{align}\]

Roughly speaking, human pitch perception is most accurate between 100 Hz and 1000 Hz, and in this range pitch correlates linearly with frequency. Human hearing represents frequencies above 1000 Hz less accurately, and above this range pitch correlates logarithmically with frequency. Logarithmic representation means that the differences between high frequencies are compressed, and hence not as accurately perceived. There are various psychoacoustic models of pitch perception scales. One common model is the mel scale, which maps a frequency f in Hz to a pitch m in mels:

\[\begin{align} m = 1127 \cdot \ln\left(1 + \frac{f}{700}\right) \end{align}\]

The inverse transformation from the Mel scale back to the frequency domain is given by:

\[\begin{align} f = 700 \cdot (e^{\frac{m}{1127}} - 1) \end{align}\]
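The measures in this section translate almost directly into NumPy. In the sketch below, the function names are mine, `hz_to_mel` is simply the algebraic inverse of the mel-to-frequency formula above, and `intensity_db` takes the ratio of signal power to the threshold value $P_0$, which is my reading of the intensity definition rather than something the formulas pin down.

```python
import numpy as np

P0 = 2e-5  # auditory threshold pressure in Pa, as quoted in the text

def rms_amplitude(x):
    """Root mean square amplitude of a block of samples."""
    return np.sqrt(np.mean(np.square(x)))

def power(x):
    """Mean squared sample value."""
    return np.mean(np.square(x))

def intensity_db(x, p0=P0):
    """Power relative to the threshold p0, in dB (assumed normalization)."""
    return 10 * np.log10(power(x) / p0)

def hz_to_mel(f):
    """Frequency in Hz to mels (inverse of mel_to_hz)."""
    return 1127 * np.log(1 + np.asarray(f, dtype=float) / 700)

def mel_to_hz(m):
    """Mels back to frequency in Hz: f = 700 * (exp(m / 1127) - 1)."""
    return 700 * (np.exp(np.asarray(m, dtype=float) / 1127) - 1)
```

A quick sanity check is that 1000 Hz lands very close to 1000 mels, which is how the constants of the scale are chosen.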

Automatic Speech Recognition

Automatic Speech Recognition (ASR) and Automatic Speech Understanding (ASU) are two critical areas in the field of speech processing and natural language processing.

- Automatic Speech Recognition (ASR): ASR is the process by which a computer system recognizes spoken language. It’s a technology that converts spoken language into written text. ASR systems have a wide range of applications, including transcription services, voice assistants like Alexa and Siri, and many more. These systems typically involve complex algorithms for acoustic and language modeling.

- Automatic Speech Understanding (ASU): ASU goes a step beyond ASR. While ASR focuses on transcribing the spoken words into text, ASU is about interpreting the meaning of the spoken words. It aims to understand the semantics and context of the speech. ASU is crucial for tasks that require natural language understanding, like conversational AI, where the system needs to understand user queries and respond appropriately.

The complexity of speech recognition tasks varies with vocabulary size. Tasks involving a small vocabulary, such as detecting “yes” or “no” or recognizing digit sequences, are relatively straightforward. However, tasks like transcribing phone conversations or broadcast news, which can involve vocabularies exceeding 64,000 words, are considerably more challenging.

Speech recognition complexity also depends on the nature of the speech. Isolated word recognition is simpler than continuous speech recognition where words must be segmented. Tasks involving human-to-machine speech, like read speech or dialogues with speech systems, are easier compared to human-to-human conversations. This is because people tend to speak slower and clearer when interacting with machines, making the speech easier to recognize.

A third dimension of variation lies in the audio quality and noise level. Recognition is easier with high-quality head-mounted microphones and in quiet environments, compared to noisy surroundings. Furthermore, recognizing speech is simpler when the speaker’s dialect matches the system’s training data. Therefore, non-standard or foreign accents, or children’s speech, can pose challenges unless the system was specifically trained on such speech.

In this article, I will mostly focus on Large-Vocabulary Continuous Speech Recognition (LVCSR) using Hidden Markov Models (HMM). Large-vocabulary generally means that the systems have a vocabulary of roughly 20,000 to 60,000 words. It is a multi-step process and we will go through it step by step.

Feature extraction: MFCC Vectors

This section outlines the conversion of the input waveform into a sequence of acoustic feature vectors, particularly using Mel Frequency Cepstral Coefficients (MFCCs), a common method in speech recognition grounded on the cepstrum principle. It can be broken down into the following steps:

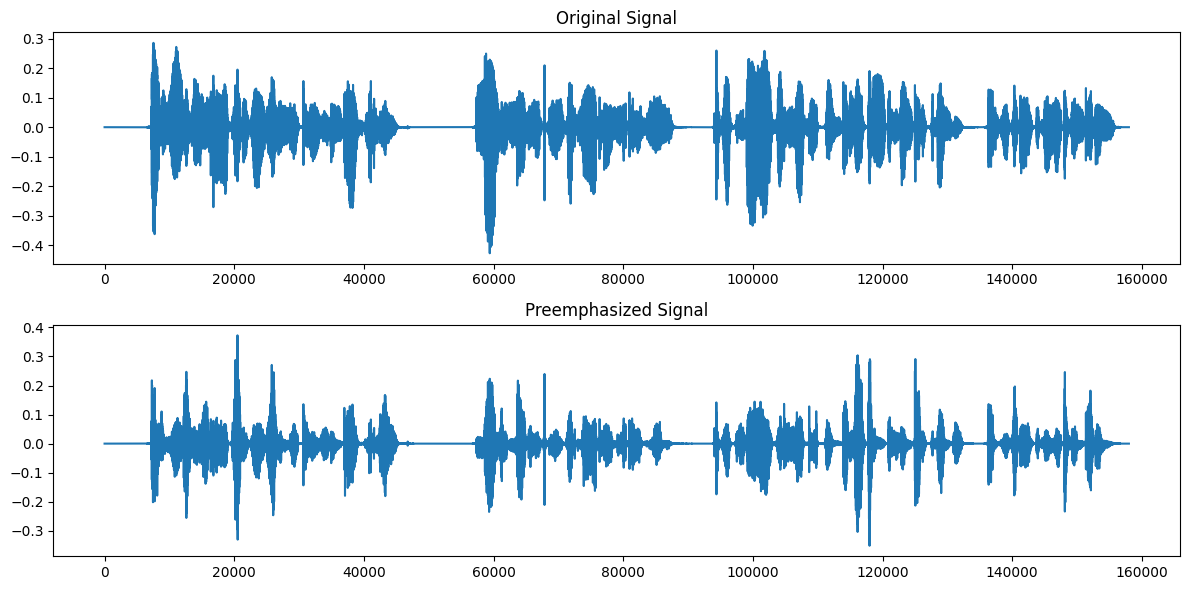

Preemphasis

Preemphasis compensates for the attenuation of high frequencies that occurs in human speech production. The preemphasis filter is a first-order filter that boosts the energy of the signal at higher frequencies. This can improve the balance between the noise and information components of the speech signal, which can lead to better recognition results. In practice, preemphasis is often realized by the following first-order difference equation:

\[\begin{align} y[n] = x[n] - \alpha x[n-1] \end{align}\]

where:

- y[n] is the output signal (preemphasized signal)

- x[n] and x[n-1] are the current and previous input signals, respectively

- $\alpha$ is the preemphasis coefficient, usually set close to 1 (a typical value is 0.97)
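The difference equation above is a one-liner in NumPy. This is a minimal sketch; the function name is mine, and treating the sample before the first one as zero is an assumption about the boundary that the equation itself leaves open.

```python
import numpy as np

def preemphasize(x, alpha=0.97):
    """Apply y[n] = x[n] - alpha * x[n-1] to a 1-D signal."""
    x = np.asarray(x, dtype=float)
    y = np.empty_like(x)
    y[0] = x[0]                        # assumption: x[-1] is taken to be 0
    y[1:] = x[1:] - alpha * x[:-1]
    return y

# A constant (zero-frequency) signal is almost cancelled out,
# while a signal alternating at the highest frequency is nearly doubled.
print(preemphasize([1.0, 1.0, 1.0, 1.0]))     # [1.   0.03 0.03 0.03]
print(preemphasize([1.0, -1.0, 1.0, -1.0]))   # [ 1.   -1.97  1.97 -1.97]
```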

Windowing

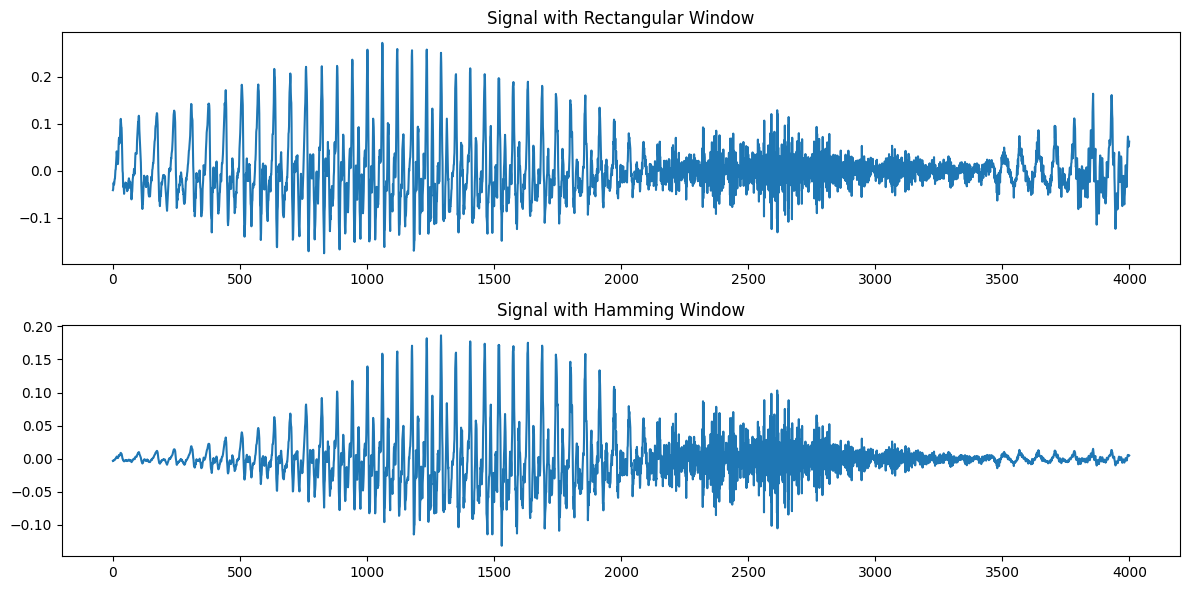

Speech is a non-stationary signal, implying that its statistical features vary over time. Hence, spectral features are typically extracted from small speech windows that represent specific subphones. Within these small regions, the signal can be approximately considered stationary, meaning its statistical properties remain constant.

Windowing in speech processing is determined by three parameters: window width (milliseconds), offset between consecutive windows, and window shape. Each window extracts a “frame” of speech, with the frame size indicating its millisecond duration and the frame shift referring to the millisecond gap between starting edges of successive windows.

Two windows are most commonly used. The rectangular window, the simplest windowing technique, can lead to issues due to its abrupt signal truncation at boundaries. This discontinuity hampers Fourier analysis, hence the Hamming window is often preferred in MFCC extraction as it tapers the signal values towards zero at the window edges, thereby circumventing discontinuities.

\[\begin{align} \text{rectangular:}\quad w[n] &= \begin{cases} 1, & 0 \leq n \leq N-1 \\ 0, & \text{otherwise} \end{cases} \\ \text{Hamming:}\quad w[n] &= \begin{cases} 0.54 - 0.46\cos\left(\frac{2\pi n}{N-1}\right), & 0 \leq n \leq N-1 \\ 0, & \text{otherwise} \end{cases} \end{align}\]
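Framing and windowing can be sketched as follows. The 25 ms frame size and 10 ms frame shift are common defaults that I am assuming here, not values given in this article, and `frame_signal` is a hypothetical helper that simply drops any trailing partial frame.

```python
import numpy as np

def frame_signal(x, sample_rate, frame_ms=25.0, shift_ms=10.0, window="hamming"):
    """Slice a 1-D signal into overlapping frames and apply a window to each.

    Assumes the signal is at least one frame long.
    """
    x = np.asarray(x, dtype=float)
    frame_len = int(round(sample_rate * frame_ms / 1000))     # samples per frame
    frame_shift = int(round(sample_rate * shift_ms / 1000))   # samples between frame starts
    n_frames = 1 + (len(x) - frame_len) // frame_shift        # trailing partial frame dropped

    # Row t holds the sample indices of frame t.
    idx = np.arange(frame_len)[None, :] + frame_shift * np.arange(n_frames)[:, None]
    frames = x[idx]

    if window == "hamming":
        n = np.arange(frame_len)
        w = 0.54 - 0.46 * np.cos(2 * np.pi * n / (frame_len - 1))
    else:
        w = np.ones(frame_len)                                # rectangular window
    return frames * w
```

At a 16 kHz sampling rate these defaults give 400-sample frames that start every 160 samples, so consecutive frames overlap by 15 ms.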

Discrete Fourier Transform (DFT)

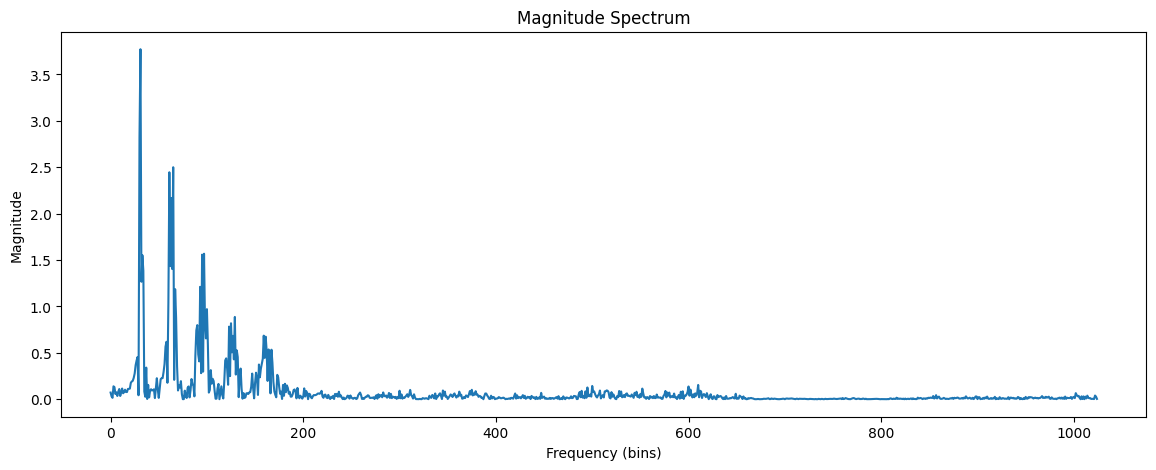

The subsequent step in the process of speech recognition involves deducing the spectral data from the windowed signal. The aim here is to determine the distribution of signal energy across various frequency bands. For a discrete-time signal, the Discrete Fourier Transform (DFT) is the method employed to extract this spectral information for distinct frequency bands.

The input to the DFT is a windowed signal x[n]…x[m], and the output, for each of N discrete frequency bands, is a complex number X[k] representing the magnitude and phase of that frequency component in the original signal.

\[\begin{align} X[k] = \sum_{n=0}^{N-1} x[n] \cdot e^{-\frac{2\pi i}{N} nk} \end{align}\]

A commonly used algorithm for computing the DFT is the Fast Fourier Transform (FFT). It is very efficient, but in its classic radix-2 form it only works when N is a power of two.
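To connect the formula to code, the sketch below evaluates the DFT sum directly as a matrix product and reads off magnitude and phase. `naive_dft` is my own illustrative function; in practice one would call `np.fft.fft`, which computes the same quantity much faster.

```python
import numpy as np

def naive_dft(x):
    """Direct O(N^2) evaluation of X[k] = sum_n x[n] * exp(-2*pi*i*n*k / N)."""
    x = np.asarray(x, dtype=complex)
    N = len(x)
    n = np.arange(N)
    k = n.reshape(-1, 1)
    basis = np.exp(-2j * np.pi * k * n / N)   # row k holds the k-th complex sinusoid
    return basis @ x

N = 64
n = np.arange(N)
x = np.cos(2 * np.pi * 4 * n / N)             # a cosine with exactly 4 cycles per frame

X = naive_dft(x)
magnitude = np.abs(X)                         # energy lands in bin 4 (and its mirror, bin 60)
phase = np.angle(X)
```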

Mel filter bank and log

The outcome of the Fast Fourier Transform (FFT) offers insight into the energy distribution across different frequency bands. Nevertheless, human hearing doesn’t respond identically across all frequencies. In Mel Frequency Cepstral Coefficients (MFCCs), this is taken into account by mapping the FFT output frequencies onto the mel scale. This warping approach considers human auditory perceptions. During the computation of MFCCs, this principle is implemented using a set of filters that gather energy from each frequency band. Below 1000 Hz, ten filters are distributed linearly, while above 1000 Hz, the remaining filters are distributed on a logarithmic scale.

In the final step, a logarithm is applied to each value of the Mel spectrum. This step reflects the logarithmic response of human perception to signal level. At higher amplitudes, humans tend to be less aware of minor amplitude differences compared to their sensitivity at lower amplitudes. In addition, using a log makes the feature estimates less sensitive to variations in input (for example power variations due to the speaker’s mouth moving closer or further from the microphone).
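Here is one way the filter bank and log step could look in NumPy. It uses a common simplification: triangular filters whose centres are equally spaced on the mel scale, which is roughly linear at low frequencies and logarithmic at high ones, rather than the exact layout of ten linear filters below 1000 Hz. The 26 filters, 512-point FFT, 16 kHz sampling rate, and the small constant inside the log are all assumptions of mine.

```python
import numpy as np

def hz_to_mel(f):
    return 1127.0 * np.log(1.0 + np.asarray(f, dtype=float) / 700.0)

def mel_to_hz(m):
    return 700.0 * (np.exp(np.asarray(m, dtype=float) / 1127.0) - 1.0)

def mel_filterbank(n_filters, n_fft, sample_rate):
    """Return an (n_filters, n_fft // 2 + 1) matrix of triangular filters."""
    # n_filters + 2 edge points, equally spaced in mels from 0 Hz to the Nyquist frequency.
    mel_points = np.linspace(hz_to_mel(0.0), hz_to_mel(sample_rate / 2), n_filters + 2)
    hz_points = mel_to_hz(mel_points)
    bin_freqs = np.fft.rfftfreq(n_fft, d=1.0 / sample_rate)

    fbank = np.zeros((n_filters, len(bin_freqs)))
    for i in range(n_filters):
        left, centre, right = hz_points[i], hz_points[i + 1], hz_points[i + 2]
        rising = (bin_freqs - left) / (centre - left)
        falling = (right - bin_freqs) / (right - centre)
        fbank[i] = np.maximum(0.0, np.minimum(rising, falling))   # triangle, clipped at 0
    return fbank

def log_mel_spectrum(frames, n_filters=26, n_fft=512, sample_rate=16000):
    """Power spectrum of each windowed frame, pooled by mel filters, then logged."""
    power_spec = np.abs(np.fft.rfft(frames, n=n_fft, axis=-1)) ** 2
    mel_energies = power_spec @ mel_filterbank(n_filters, n_fft, sample_rate).T
    return np.log(mel_energies + 1e-10)        # small constant avoids log(0)
```

Each row of the result holds one log mel energy per filter for the corresponding frame.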

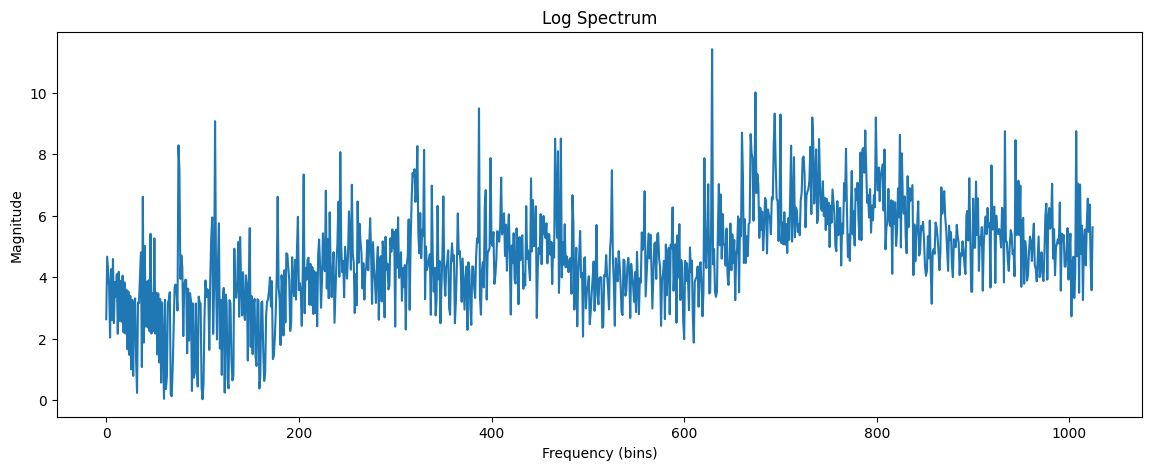

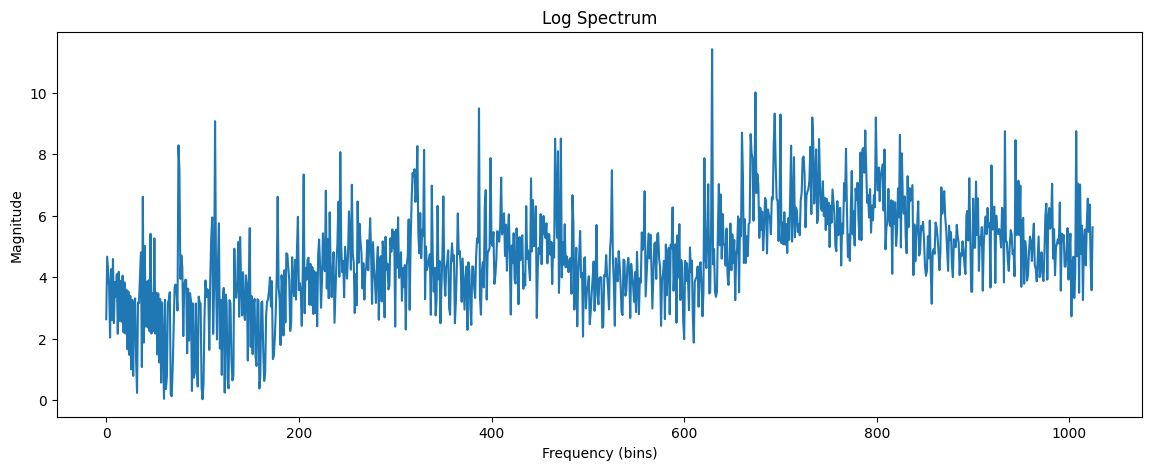

The Cepstrum: Inverse Discrete Fourier Transform

The cepstrum can be thought of as the spectrum of the log of the spectrum. This may sound confusing. But let’s begin with the easy part: the log of the spectrum. That is, the cepstrum begins with a standard magnitude spectrum. The next step is to visualize the log-spectrum as if it were itself a waveform (see fig. 5). The log-spectrum of a signal exhibits rich characteristics. It generates a largely continuous signal, mainly due to the smoothing effect of windowing. A prominent feature is its periodic structure, which mirrors the harmonic structure of the audio signal, driven by the fundamental frequency. Additionally, by linking the harmonic structure’s peaks, we observe macro-level features known as formants, resonances of the vocal tract. These formants play a crucial role in vowel identification, making their extraction vital. Hence, quantifying these macro-level structures is key due to their association with vocal identity.

Mathematically, the cepstrum is more formally defined as the inverse DFT of the log magnitude of the DFT of a signal, hence for a windowed frame of speech x[n]:

\[\begin{align} c[n] = \frac{1}{N}\sum_{k=0}^{N-1} \left( \log\left| \sum_{m=0}^{N-1} x[m] \cdot \exp\left(-\frac{2\pi i km}{N}\right) \right|^2 \right) \cdot \exp\left(\frac{2\pi i kn}{N}\right) \end{align}\]

In MFCC extraction, typically only the first 12 cepstral coefficients are used. These coefficients provide specific information about the vocal tract filter, distinctly separated from details related to the glottal source.
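The definition above can be followed literally with NumPy's FFT routines. This is only a sketch of the formula applied to a single frame: practical MFCC pipelines usually apply a discrete cosine transform to the log mel energies instead, and whether c[0] counts among the "first 12" is a convention I am assuming here. The small constant inside the log is also my addition, to avoid log(0).

```python
import numpy as np

def real_cepstrum(frame, n_coeffs=12):
    """Inverse DFT of the log power spectrum of one windowed frame."""
    spectrum = np.fft.fft(frame)
    log_power = np.log(np.abs(spectrum) ** 2 + 1e-10)
    # np.fft.ifft already includes the 1/N factor from the definition.
    # For a real input frame the log power spectrum is symmetric,
    # so the result is real up to rounding error.
    cepstrum = np.fft.ifft(log_power).real
    return cepstrum[:n_coeffs]                 # c[0] .. c[n_coeffs - 1]
```

A useful way to build intuition: if a frame contains a copy of itself delayed by d samples, the cepstrum shows a peak at index d, because the echo adds a ripple of period N/d to the log spectrum.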

Deltas and Energy

The extraction of the cepstrum via the Inverse DFT from the previous section results in 12 cepstral coefficients for each frame. We next add a thirteenth feature: the energy from the frame. Energy correlates with phone identity and so is a useful cue for phone detection (vowels and sibilants have more energy than stops, etc). The energy in a frame is the sum over time of the power of the samples in the frame; thus for a signal x in a window from time sample $t_1$ to time sample $t_2$, the energy is:

\[\begin{align} Energy = \sum_{t=t_1}^{t_2}x^2[t] \end{align}\]

Speech signals also vary from frame to frame, and this variation can offer useful cues for phone identification. Therefore, along with the 13 features (12 cepstral features plus energy), delta or velocity features and double delta or acceleration features are also included. The delta features represent the change between frames in the corresponding cepstral/energy features, and the double delta features represent the change between frames in the delta features.

A simple way to compute deltas would be just to compute the difference between frames; thus the delta value d(t) for a particular cepstral value c(t) at time t can be estimated as:

\[\begin{equation} d(t) = \frac{c(t+1) - c(t-1)}{2} \end{equation}\]

MFCC

By adding energy, as well as delta and double-delta features, to the original 12 cepstral features, a total of 39 MFCC features is created.

- 12 cepstral coefficients

- 12 delta cepstral coefficients

- 12 double delta cepstral coefficients

- 1 energy coefficient

- 1 delta energy coefficient

- 1 double delta energy coefficient
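Putting the last steps together, the sketch below computes frame energy, deltas, and double deltas and stacks them into 39-dimensional vectors. It assumes you already have a (T, 12) array of cepstral coefficients and the (T, frame_len) windowed frames they came from. The function names, the repeated-edge padding for the first and last frame, and the column order (12 cepstra, energy, then 13 deltas, then 13 double deltas) are my own choices.

```python
import numpy as np

def frame_energy(frames):
    """Sum of squared samples in each frame."""
    return np.sum(frames ** 2, axis=1)

def delta(features):
    """d(t) = (c(t+1) - c(t-1)) / 2 along the time axis.

    The first and last frames are repeated as padding (an assumption),
    so the output has the same number of frames as the input.
    """
    padded = np.pad(features, ((1, 1), (0, 0)), mode="edge")
    return (padded[2:] - padded[:-2]) / 2.0

def assemble_mfcc(cepstra, frames):
    """Stack static, delta, and double-delta features into (T, 39) vectors."""
    energy = frame_energy(frames)[:, None]
    static = np.hstack([cepstra, energy])      # (T, 13): 12 cepstra + energy
    d1 = delta(static)                         # (T, 13): velocity
    d2 = delta(d1)                             # (T, 13): acceleration
    return np.hstack([static, d1, d2])         # (T, 39)
```

Each row of the result is the feature vector for one frame, which is what an acoustic model would consume.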