Optimization & Regularization - The Power Duo!

The fundamentals of deep learning... continued.

Learning with momentum.

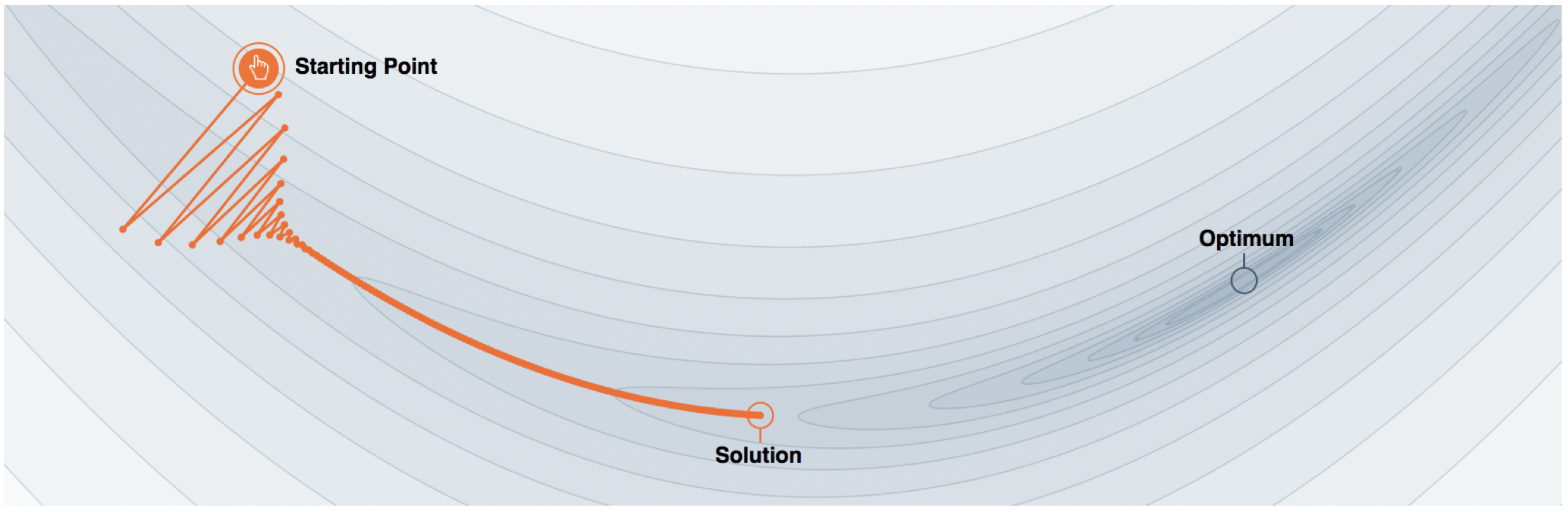

Moving in the steepest descent direction is a greedy approach that only considers current information and ignores past and future information, leading to oscillations, instability, and slow convergence. This is especially problematic when the objective function is disproportionately scaled, resulting in “ravines” in the function landscape, causing the algorithm to make large leaps in contracted directions and slow progress along elongated directions, leading to oscillations and slow or no convergence to the optimal point.

A popular story about momentum goes as follows: gradient descent is a man walking down a hill. He follows the steepest path downwards; his progress is slow, but steady. Momentum is a heavy ball rolling down the same hill. The added inertia acts both as a smoother and an accelerator, dampening oscillations and causing us to barrel through narrow valleys, small humps and local minima.

Polyak’s Momentum (Heavy ball method)

Momentum gradient descent, or the heavy ball algorithm was first proposed in the 60s

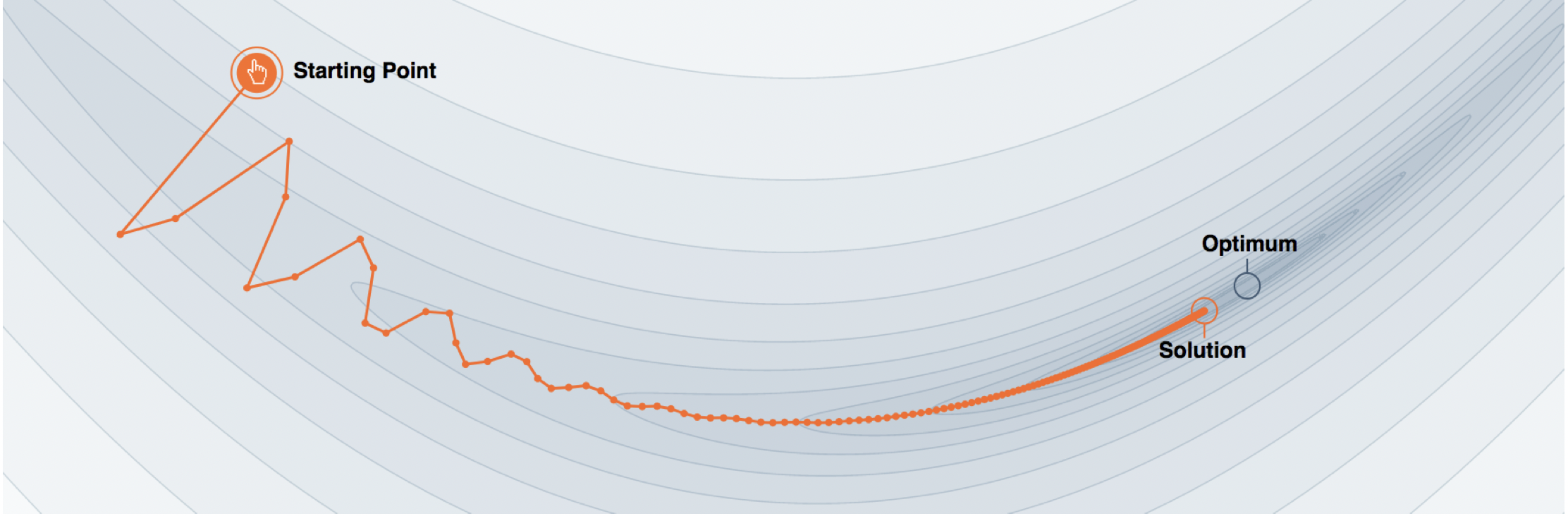

The same concept applies to neural networks, during training the update direction tends to resist change when momentum is added to the update scheme. When the neural net approaches a shallow local minimum it’s like applying brakes but not sufficient to instantly affect the update direction and magnitude. Hence the neural nets trained this way will overshoot past smaller local minima points and only stop in a deeper global minimum.

If we imagine the current iterate as an object with unit mass, then our gradient descent update should be proportional to the previous step size. The full momentum update is:

\[\begin{eqnarray} & v_{t} & \leftarrow \beta v_{t-1} - \alpha \nabla f(\theta_{t}) \\ & \theta_{t+1} & \leftarrow \theta_{t} + v_{t} \nonumber \end{eqnarray}\]or can be combined into a single equation

\[\begin{equation} \theta_{t+1} \leftarrow \theta_{t} - \alpha \nabla f(\theta_{t}) + \beta(\theta_{t} - \theta_{t-1}) \end{equation}\]where \(\beta\) is a hyperparameter (typically \(\beta \in [0,1]\), although not limited to it), which scales down the previous step. Adding this scaled previous step controls oscillation and in low curvatures causes acceleration along the same direction. The overall effect is that it allows the step size \(\alpha\) to be larger and decreases the number of steps to convergence.

In terms of physical analogy, there are two kinds of forces: One force is proportional to the negative gradient of the cost function: \(- \nabla_{\theta} J(\theta)\). This force pushes the particle downhill along the cost function surface. The other force is the viscous drag, proportional to \(- v(t)\). This causes the particle to gradually lose energy over time and eventually converge to a local minimum or else it will keep oscillating back and forth around a valley forever.

In simple gradient descent, the size of the step was simply the norm of the gradient multiplied by the learning rate. Now, the size of the step depends on how large and how aligned a sequence of gradients are. The step size is largest when many successive gradients point in exactly the same direction. If the momentum algorithm always observes gradient g, then it will accelerate in the direction of −g, until reaching a terminal velocity where the size of each step is

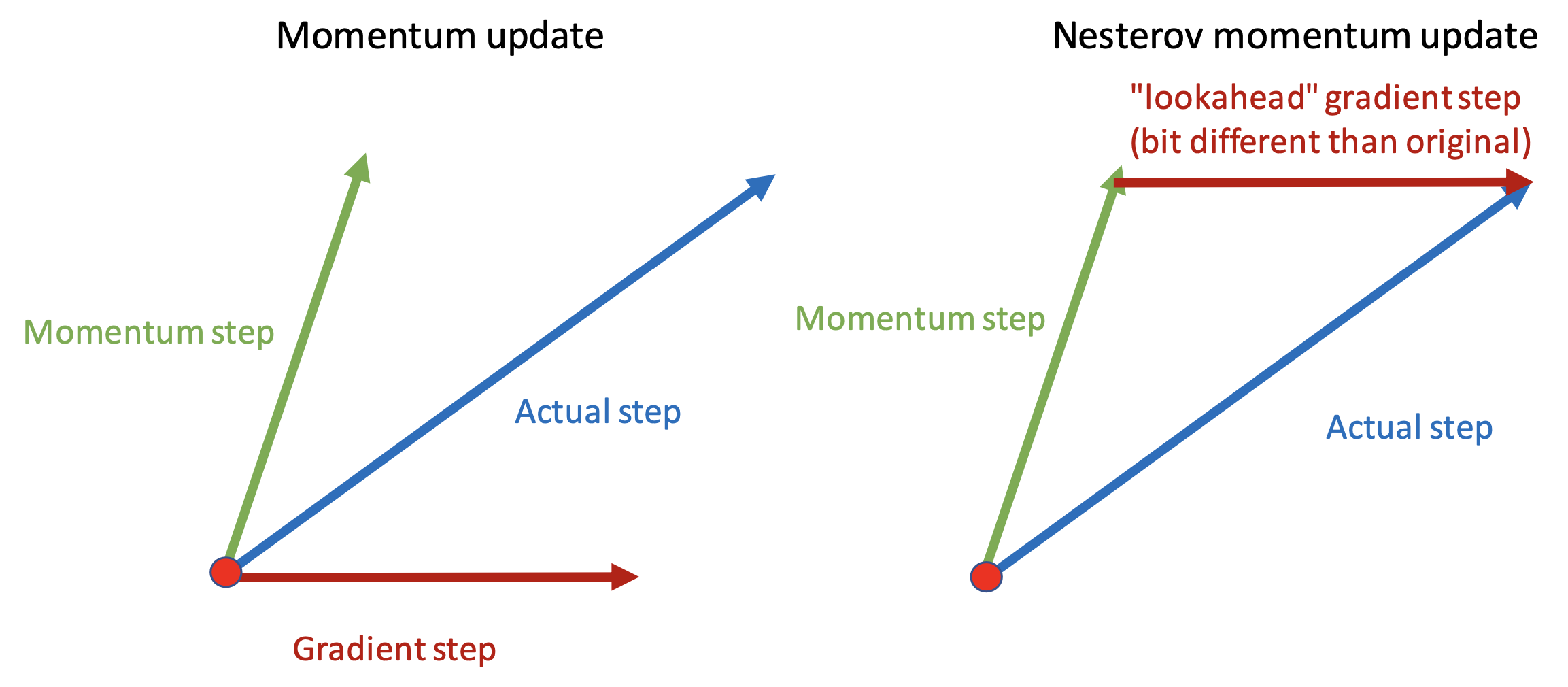

\[\frac{\alpha ||g||}{1 - \beta}\]Nesterov Momentum

Nesterov momentum, also known as Nesterov accelerated gradient (NAG), is a variant of the momentum gradient descent. It was introduced by Yurii Nesterov in 1983

Adaptive Learning Rates

Neural network researchers have long realized that the learning rate was reliably one of the hyperparameters that is the most difficult to set because it has a significant impact on model performance. The cost is often highly sensitive to some directions in parameter space and insensitive to others. The momentum algorithm can mitigate these issues somewhat, but does so at the expense of introducing another hyperparameter. As such, it is quite natural to look for other ways.

Adagrad

The AdaGrad algorithm, individually adapts the learning rates of all model parameters by scaling them inversely proportional to the square root of the sum of their historical squared values

$$ \begin{equation} \theta_{t+1} = \theta_{t} - \frac{\eta}{\sqrt{G_{t} + \epsilon}}\odot g_{t} \end{equation}

where \(G_{t} \in \mathbb{R}^{d X d}\) is a diagonal matrix where each diagonal element i,i is the sum of the squares of the gradients w.r.t. \(\theta_{i}\) upto time step t, , while \(\epsilon\) is a smoothing term that avoids division by zero (usually on the order of 1e-8).

One of Adagrad’s main benefits is that it eliminates the need to manually tune the learning rate. Most implementations use a default value of 0.01 and leave it at that. On the other hand, Adagrad accumulates squared gradients causing the learning rate to shrink and eventually stop learning. Newer algorithms aim to solve this problem.

RMSProp

The RMSProp algorithm

Adam (Adaptive Moment Estimation)

Adam

\(m_{t}\) and \(v_{t}\) are estimates of the first moment (the mean) and the second moment (the uncentered variance) of the gradients respectively, hence the name of the method. As \(m_{t}\) and \(v_{t}\) are initialized as vectors of 0’s, the authors of Adam observe that they are biased towards zero, especially during the initial time steps, and especially when the decay rates are small. So they counteract these biases by computing bias-corrected first and second moment estimates.

Following

We are trying to calculate \(\mathbb{E}[m_{t}]\) ,

\[\begin{align*} \mathbb{E}[m_{t}] &=& \mathbb{E}\left[ (1 - \beta_{1})\sum_{i=1}^{t}\beta_{1}^{t-i}\cdot g_{i} \right] \\ &=& \mathbb{E}[g_{i}] \cdot (1 - \beta_{1})\sum_{i=1}^{t}\beta_{1}^{t-i}\cdot g_{i} + \zeta \\ &=& \mathbb{E}[g_{i}] \cdot (1 - \beta_{1}^{t}) + \zeta \end{align*}\]where \(\zeta\) can be kept small since the exponential decay rate \(\beta_{1}\) can (and should) be chosen such that the exponential moving average assigns small weights to gradients too far in the past.So the bias-corrected first and second moment estimates are given by:

\[\begin{align*} \hat{m}_{t} &=& \frac{m_{t}}{1 - \beta_{1}^{t}} \\ \hat{v}_{t} &=& \frac{v_{t}}{1 - \beta_{2}^{t}} \end{align*}\]Finally, using these to update the parameters just as we have seen in RMSprop, which yields the Adam update rule:

\[\begin{equation} \theta_{t+1} = \theta_{t} - \frac{\eta}{\sqrt{\hat{v}_{t} + \epsilon}}\odot \hat{m}_{t} \end{equation}\]The authors propose default values of 0.9 for \(\beta_{1}\), 0.999 for \(\beta_{2}\) and \(10^{-8}\) for \(\epsilon\).

Second-Order Methods

In contrast to first-order methods, second-order methods make use of second derivatives to improve optimization. The second-order derivatives methods provide better training trajectory across the local curvature of the error surface with the help of additional Hessian information. The use of Hessian matrices ease the fine-tuning of hyperparameter by adaptively switching the step size according to the different stages of learning.

Newton’s Method

Newton method is the main second-order derivatives method but received less popularity due to heavy computation and memory usage. It is an optimization scheme based on using a second-order Taylor series expansion to approximate \(J(\theta)\) near some point \(\theta_{0}\), ignoring derivatives of higher order:

\[\begin{equation} J(\theta) = J(\theta_0) + (\theta - \theta_0)^\top \nabla J(\theta_0) + \frac{1}{2} (\theta - \theta_0)^\top \mathbf{H}(J(\theta_0)) (\theta - \theta_0) + \mathcal{O}(|\theta - \theta_0|^3) \end{equation}\]where H is the Hessian of J with respect to \(\theta\) evaluated at \(\theta_{0}\). If we then solve for the critical point of this function, we obtain the Newton parameter update rule:

\[\begin{equation} \theta^* = \theta_0 - \mathbf{H}^{-1} \nabla J(\theta_0) \end{equation}\]For a locally quadratic function with a positive definite Hessian matrix, Newton’s method can directly jump to the minimum by rescaling the gradient by the inverse Hessian. If the objective function is convex but not quadratic, this update can be iterated to yield the training algorithm associated with Newton’s method. As long as the Hessian remains positive definite, Newton’s method can be applied iteratively even for non-quadratic surfaces. This requires a two-step iterative procedure of updating or computing the inverse Hessian and then updating the parameters according to the update rule.

In deep learning, the surface of the objective function is typically non-convex with many features, such as saddle points, that are problematic for Newton’s method. When the Hessian has both positive and negative eigenvalues, as near a saddle point, Newton’s method can cause updates to move in the wrong direction. To address this issue, the Hessian can be regularized, for example, by adding a constant \(\alpha\) along its diagonal. This leads to a regularized update that can help avoid the problem of wrong direction updates as long as the negative eigenvalues of the Hessian are still relatively close to zero.

\[\begin{equation} θ^{*} = θ_0 - [H(J(θ_0)) + \alpha I]^{-1} * ∇J(θ_0) \end{equation}\]Newton’s method is not well-suited for training large neural networks due to its significant computational burden. The number of elements in the Hessian matrix grows quadratically with the number of parameters, which for even small networks can be in the millions. Inverting a \(k × k\) matrix has a computational complexity of \(O(k^3)\). Additionally, the inverse Hessian must be computed at every iteration, which makes the method impractical for large networks. Therefore, Newton’s method can only be applied to small neural networks with a limited number of parameters.

Conjugate Gradients

Conjugate gradients is a method to efficiently avoid the calculation of the inverse Hessian by iteratively descending conjugate directions. The conjugate gradient method starts by computing the gradient of the loss function with respect to the model parameters, $\nabla J(\theta)$.

- Initialize the search direction $\mathbf{d}_0 = -\nabla J(\theta_0)$

- Calculate the step size $\alpha_t$ by line search, i.e., $\alpha_t = \operatorname*{argmin}_{\alpha \geq 0}J(\theta_t + \alpha d_t)$.

- Update the parameters by $\theta_{t+1} = \theta_t + \alpha_t d_t$.

- Calculate the gradient $\nabla_{\theta}J(\theta_{t+1})$.

- Calculate the conjugate direction $\beta_{t+1} = \frac{( \nabla J_{t+1} - \nabla J_{t} )^\top \nabla J_{t+1}}{\nabla J_{t}^\top \nabla J_{t}}$.

- Update the search direction by $d_{t+1} = -\nabla_{\theta}J(\theta_{t+1}) + \beta_{t+1} d_t$.

- Increment the iteration counter by $t=t+1$ and repeat from step 2 until convergence.

Conjugate gradient method ensures that the gradient along the previous search direction does not increase in magnitude on a quadratic surface, thus remaining at the minimum along the previous directions. However, in deep learning, since the objective is not necessarily quadratic, this property does not hold. Therefore, the nonlinear conjugate gradient algorithm includes occasional resets where the method is restarted with line search along the unaltered gradient.

BFGS

The BFGS (Broyden-Fletcher-Goldfarb-Shanno) method is an optimization algorithm used to solve unconstrained nonlinear optimization problems. It is based on the Quasi-Newton method, which updates an approximation of the inverse Hessian matrix (denoted by \(B\)) at each iteration. The algorithm maintains an approximation of the inverse Hessian, which is updated using the gradient information. The update rule for the inverse Hessian approximation is given by:

\[\begin{equation} B_{t+1} = B_t + \frac{\Delta B_t \Delta B_t^T}{\Delta B_t^T \Delta g_t} - \frac{B_t g_t g_t^T B_t}{g_t^T B_t g_t} \end{equation}\]where $\Delta B_t = B_{t+1} - B_t$ and $\Delta g_t = g_{t+1} - g_t$ are the changes in the inverse Hessian approximation and the gradient, respectively, between the current and the previous iteration.

The update rule for the parameter vector is given by:

\[\begin{equation} p_{t+1} = p_t - \alpha_t B_t^{-1} g_t \end{equation}\]where $p_t$ and $g_t$ are the parameter vector and the gradient vector, respectively, at iteration t, and $\alpha_t$ is a scalar that gives the step size.

BFGS is computationally efficient for optimizing high-dimensional functions and can handle non-linear optimization problems. However, it requires significant computational resources to store and update the inverse Hessian approximation matrix at each iteration, especially for large-scale deep learning models.

A comprehensive review of the different optimization algorithms can be found here.

The art of Regularization

A central problem in machine learning is how to make an algorithm that will perform well not just on the training data, but also on new inputs. The role of regularization is to modify a deep learning model to perform well with inputs outside the training dataset. Specifically, regularization focuses on reducing the test or generalization error without affecting the initial training error.

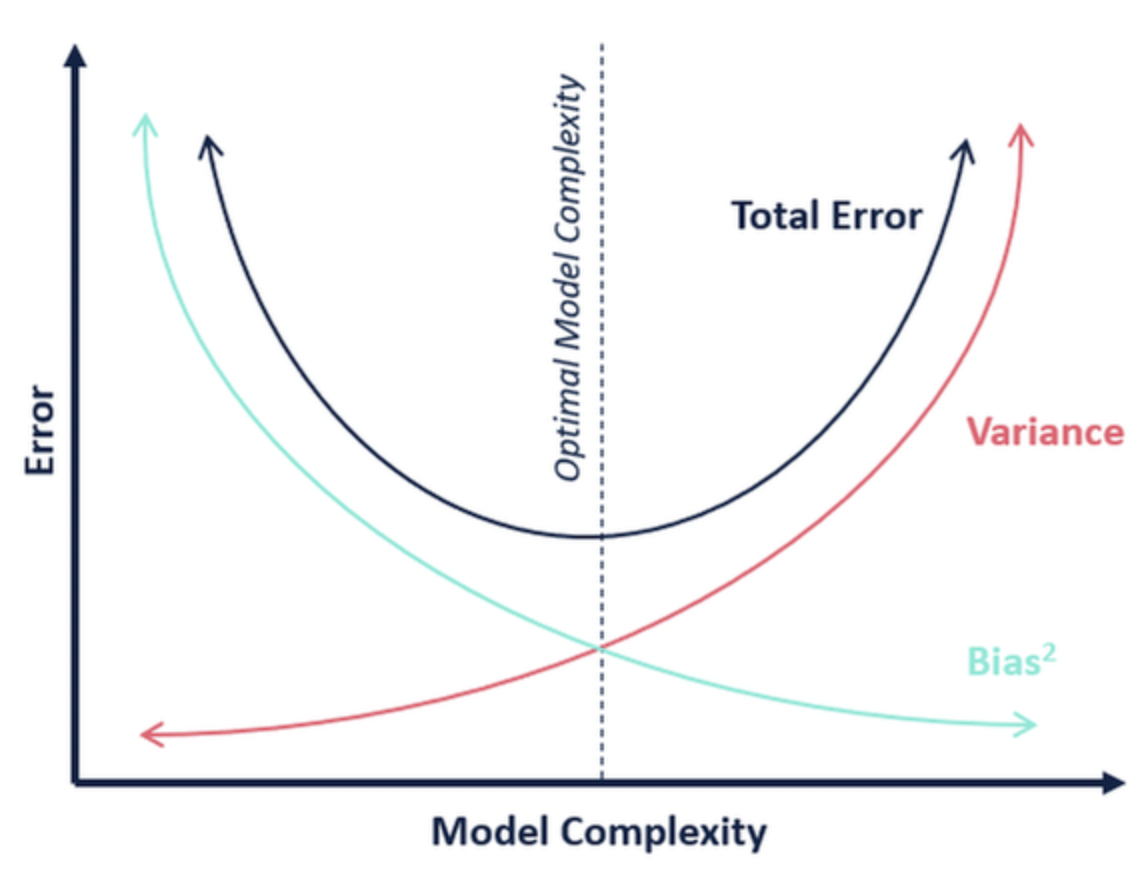

The Bias-Variance tradeoff

The bias-variance tradeoff is a fundamental concept in machine learning that refers to the tradeoff between the ability of a model to accurately capture the underlying relationships in the data (low bias) and its ability to generalize well to new, unseen data (low variance).

A model with high bias will underfit the data, meaning it is too simple and cannot accurately capture the patterns in the data. On the other hand, a model with high variance will overfit the data, meaning it is too complex and captures the noise in the data, which results in poor performance on new data. Regularization techniques optimize estimators by trying to mitigate this conflict of simultaneously minimizing these two sources of error.

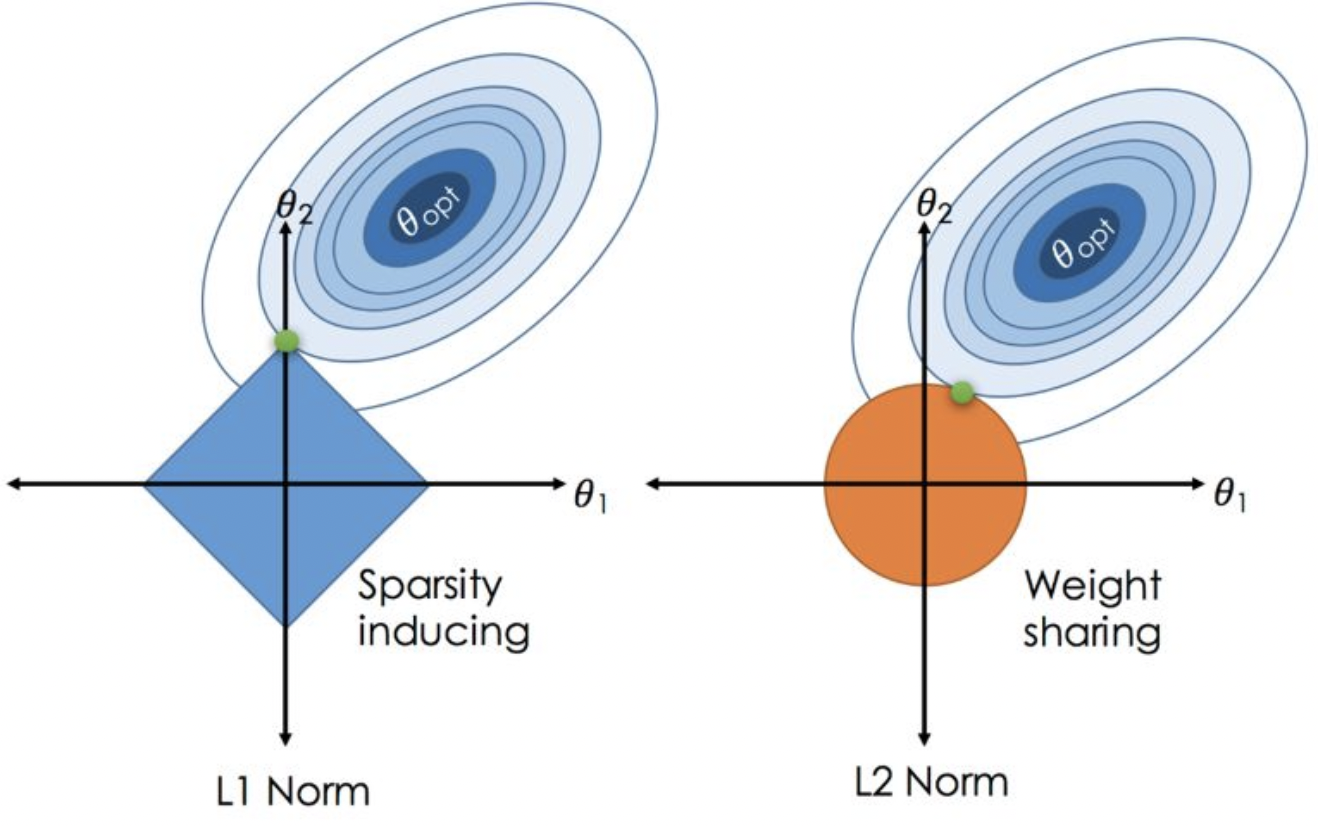

L$^{2}$ Regularization

the L$^{2}$ parameter norm penalty commonly known as weight decay. This regularization strategy drives the weights closer to the origin1 by adding a regularization term \(\Omega(\theta) = \frac{1}{2}\lvert\lvert\theta \rvert\rvert^2_2\) to the objective function. In other academic communities, L$^{2}$ regularization is also known as ridge regression or Tikhonov regularization.

The regularized cost function is given by:

\[\begin{equation} \tilde{J}(\theta) = J(\theta) + \frac{\lambda}{2} ||\theta||^2_2 \end{equation}\]Taking the derivative of the regularized cost function with respect to $\theta$ yields:

\[\begin{equation} \frac{\partial \tilde{J}(\theta)}{\partial \theta} = \frac{\partial J(\theta)}{\partial \theta} + \lambda \theta \end{equation}\]In matrix notation, this becomes:

\[\begin{equation} \nabla_{\theta} \tilde{J}(\theta) = \nabla_{\theta} J(\theta) + \lambda \theta \end{equation}\]Finally, updating the weights using gradient descent with L$^{2}$ regularization is done as follows:

\[\begin{equation} \theta \leftarrow \theta - \alpha \left(\nabla_{\theta} J(\theta) + \lambda \theta\right) \end{equation}\]This modification has the effect of shrinking the weight vector towards the origin, effectively reducing the magnitude of the weights and preventing overfitting.

In terms of the eigenvalues of the Hessian matrix, L$^{2}$ regularization can be shown to scale down the eigenvalues of the matrix, which in turn has the effect of reducing the sensitivity of the cost function to changes in the weight vector. L$^{2}$ regularization shrinks the weight values corresponding to the directions in the weight space where the eigenvalues of the Hessian matrix are small. This is because such directions do not significantly increase the gradient, and hence, do not contribute much to reducing the objective function. The regularization preserves the weight values corresponding to important directions in the weight space where the eigenvalues are large.

L$^{1}$ Regularization

L1 regularization, also known as Lasso regularization, is a method used to prevent overfitting in machine learning models. It adds a penalty term to the loss function that is proportional to the absolute value of the model’s weights given by \(\Omega(\theta) = \lvert\lvert\theta \rvert\rvert_1\). This penalty term encourages the model to have sparse weights by pushing many of them to zero. In other words, L1 regularization promotes feature selection by forcing the model to focus on a smaller subset of features.

The L$^{1}$ norm leads to sparse solutions shown in fig. 6. The cost function is minimized where its isosurface touches the regularization term’s isosurface. When they touch at an axis, a parameter is 0, resulting in sparsity. For L$^{2}$ norm, sparsity requires the minima without regularization to be on an axis. L$^{1}$ regularization can induce sparsity even when the minima is not on an axis due to its sharp edges.

Dropout

Dropout

During training, each neuron has a probability of being dropped out (typically set between 0.2 and 0.5). This means that during each iteration of training, some of the neurons will be randomly dropped out, and the model will be trained on a subset of the full network.

The idea behind dropout is that it forces the model to learn more robust features, as it cannot rely on any single neuron to always be present. It also prevents co-adaptation of neurons, where some neurons become dependent on the presence of others.

At test time, the full network is used, but each neuron’s output is scaled by the probability of it being present during training. This ensures that the output of the network at test time is similar to what it would have been if all the neurons were present during training. However, to ensure that the output of the network is similar to what it would have been during training, we scale the activations of the neurons by the probability of their presence during training (i.e., 1 - dropout probability).

Early Stopping

During training, the model is typically trained on a portion of the available data, known as the training set, and its performance is evaluated on a separate portion of the data, known as the validation set. As the model is trained, its performance on the validation set is monitored, and if the performance begins to worsen, the training process is stopped early, hence the name “early stopping.”

The intuition behind early stopping is that as the model becomes increasingly complex, it can begin to memorize the training set rather than learning general patterns, resulting in overfitting. By monitoring the performance on the validation set, early stopping can prevent this by stopping the training process before the model starts to overfit.

Early stopping is a very unobtrusive form of regularization, in that it requires almost no change in the underlying training procedure, the objective function, or the set of allowable parameter values. This means that it is easy to use early stopping without damaging the learning dynamics.

Other techniques

- Data augmentation: Data augmentation involves artificially increasing the size of the training dataset by applying random transformations to the input data, such as rotation, scaling, and cropping. This helps the model generalize better to new data and reduces the risk of overfitting.

- Batch normalization: Batch normalization involves normalizing the inputs to a layer to have zero mean and unit variance. This helps to stabilize the distribution of the inputs and prevent the model from overfitting to specific input distributions.

- DropConnect: DropConnect is a regularization technique that is similar to Dropout, but instead of dropping out individual neurons during training, it drops out individual weights (connections) between neurons. In other words, DropConnect randomly sets a fraction of the weights in the neural network to zero during training.

- Adversarial Training: Adversarial training involves training the model on adversarial examples, which are inputs that have been deliberately designed to cause the model to make errors. By exposing the model to these challenging examples during training, the model becomes more robust to variations in the input data.

- Label Smoothing: Label smoothing adds a small amount of noise to the labels of the training data. This can help to prevent the model from becoming overconfident in its predictions and can improve the generalization performance of the model.

- Curriculum Learning: Curriculum learning is a procedure of training the model on examples in a particular order, starting with simple examples and gradually increasing the difficulty. This can help the model to learn more robust features and prevent overfitting to the easy examples.

- Knowledge Distillation: In knowledge distillation, a smaller, more efficient model is trained to mimic the behavior of a larger, more complex model. By transferring the knowledge from the larger model to the smaller model, the smaller model can achieve similar performance with fewer parameters.

This would be a good place to stop. In this post, I summarized the optimization and regularization techniques commonly used in deep learning. Check chapters 7 and 8 of the deep learning