Deep Learning - the basics

The fundamentals of deep learning... in some detail.

- The ‘Deep’ in deep learning.

- The ‘learning’ in deep learning.

- Loss functions

- The mapping function (\(f_{w}\))

- Gradient Descent

- Learning by example: The XOR function

The ‘Deep’ in deep learning.

Deep learning is a specific subfield of machine learning: a new take on learning representations from data that puts an emphasis on learning successive layers of increasingly meaningful representations. The deep in deep learning stands for this idea of successive layers of representations. How many layers contribute to a model of the data is called the depth of the model. In deep learning, the layered representations are learned via models called neural networks. The phrase neural network has its roots in neurobiology. While some of the fundamental principles in deep learning were influenced by our knowledge of the brain, deep learning models are not exact replicas of the brain. There is no substantial proof that the learning mechanisms utilized in modern deep learning models are implemented in the brain.

Deep learning can be illustrated by a toddler’s process of learning the concept of a dog. The toddler points at various objects, says the word “dog,” and the parent confirms or denies if it is a dog. Through this process, the toddler builds a hierarchy of abstraction and becomes more familiar with the characteristics that define a dog. Each level of abstraction is created with knowledge gained from the previous level.

The ‘learning’ in deep learning.

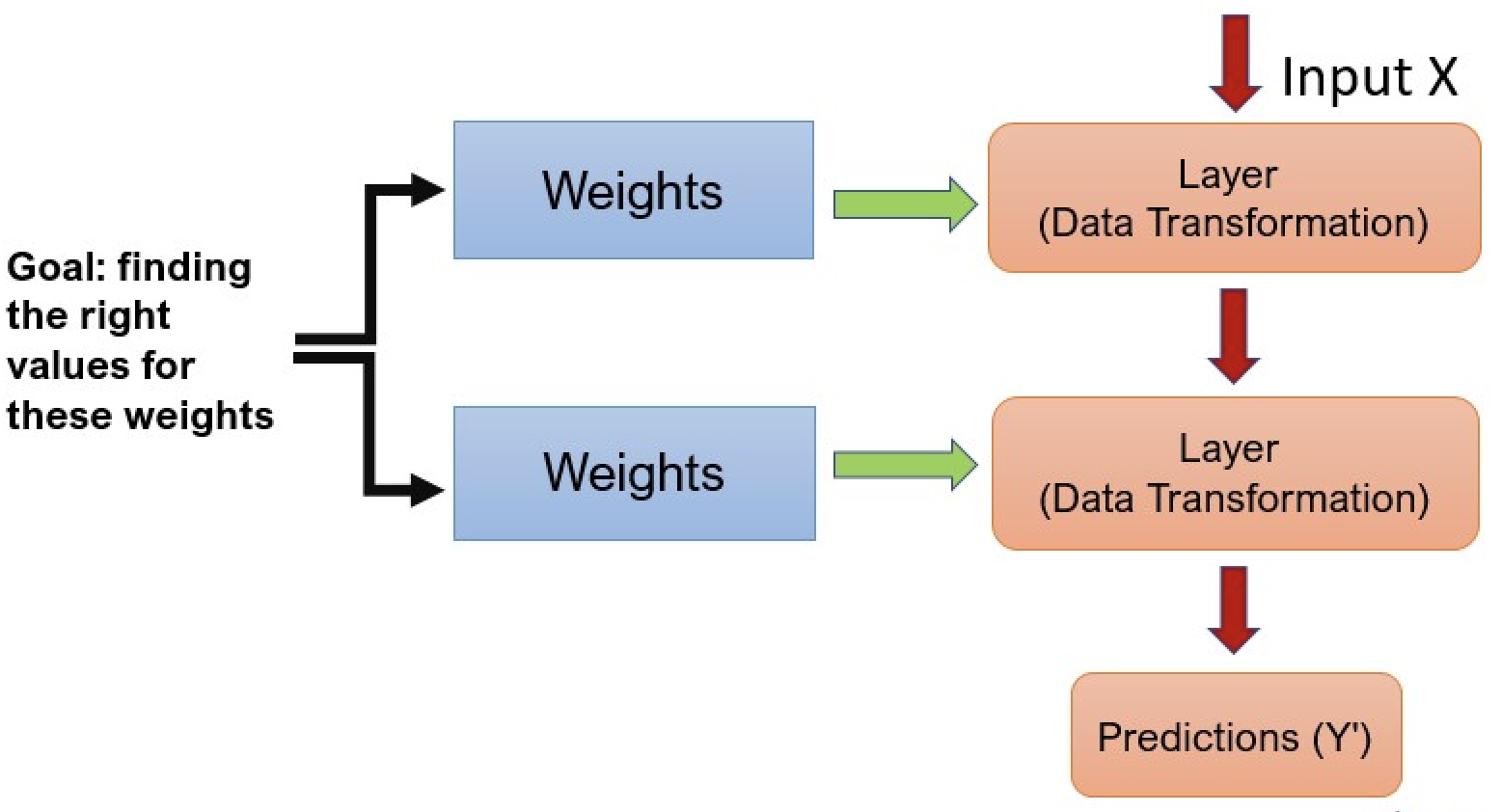

As nicely presented by Francois Chollet in his famous deep learning book,

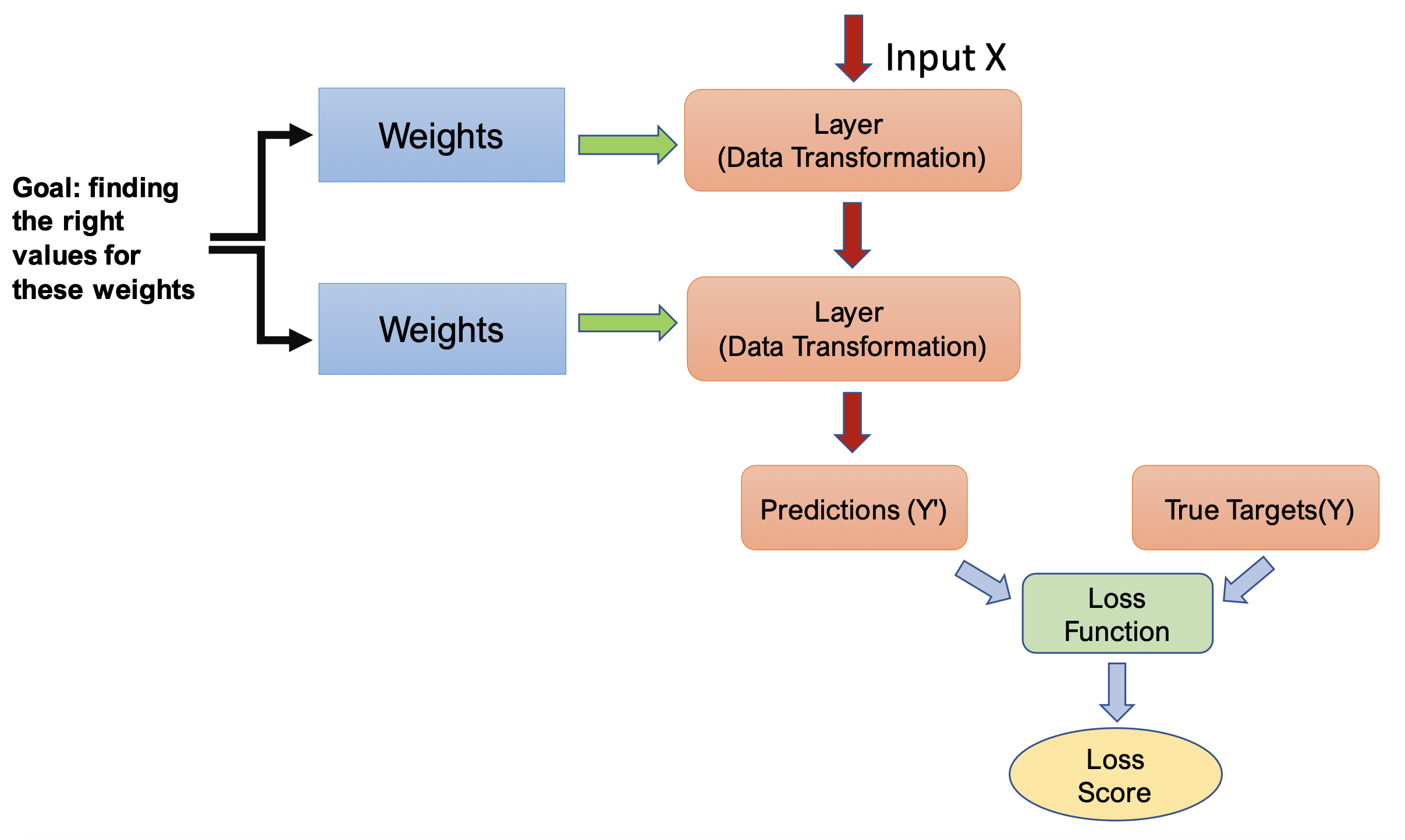

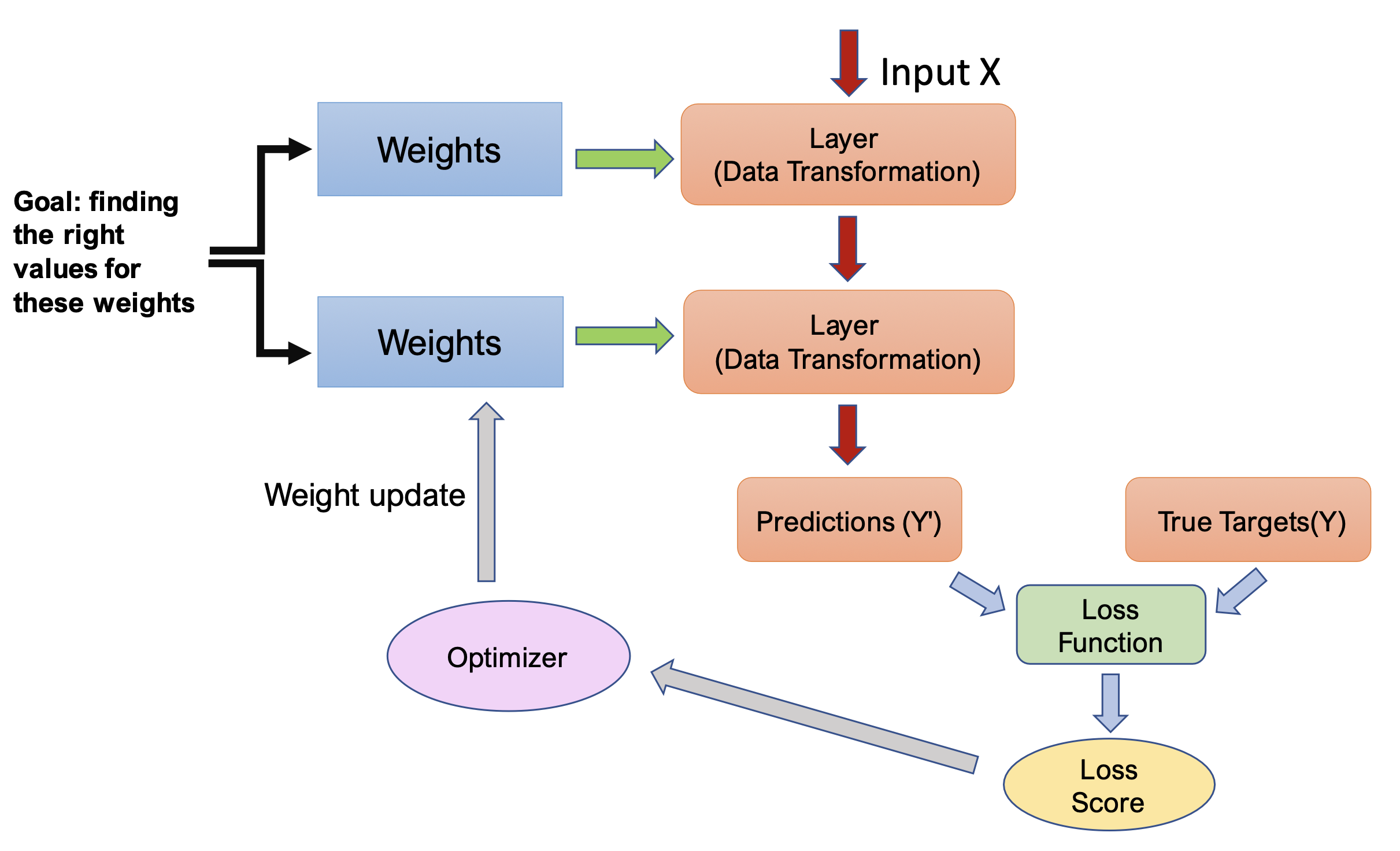

To control a neural network’s output, we need a loss function that measures the distance between the predicted output and the expected output. It computes a score based on how well the network performed on a specific example (see figure 2).

Deep learning’s fundamental technique is to use the loss score as a feedback signal to slightly adjust the weights towards lowering the loss score for the current example. The optimizer performs this adjustment using the Backpropagation algorithm, which is the central algorithm in deep learning.

In the beginning, the network weights are random, and the output is far from ideal, resulting in a high loss score. As the network processes examples, the weights are adjusted slightly in the correct direction, decreasing the loss score. This iterative process, called the training loop, is repeated until the loss function is minimized, typically over tens of iterations on thousands of examples. A network with minimal loss has outputs that closely match the targets and is considered trained.

How learning differs from pure optimization.

Traditional optimization algorithms differ from optimization algorithms used for training deep neural networks (or other machine learning algorithms for that matter). In most scenarios, we care about a performance measure P that is defined on the test set. This measure is usually intractable. We optimize P indirectly by reducing a different cost function J(θ) in hope that doing so will improve P. This is in contrast to pure optimization where minimizing J(θ) is the goal itself

Typically, the loss function or the expected generalization error can be written as an average over the training dataset,

\[\label{lec1:ERM} \mathbb{E}_{(x,y) \sim \hat{P}_{X,Y}^{(N)}} [l(f(X),Y)]\]where l is the per-example loss function, $ f(X) $ is the predicted output when the input is X, and \(\hat{P}_{X,Y}\) is the emperical distribution. Y is the target output for the supervised case. It is usually preferred to minimize the corresponding loss function where the expectation is calculated across the data-generating distribution rather than just over the training set.

Loss functions

The fundamental goal of machine learning is to reduce the cost function

For the ERM problem, there are many choices for the loss function depending on the type of problem being solved.

MSE Loss

In the regression problem, where \(f_w(x)\in \mathbb{R}\), a good option is the mean squared error (MSE) or quadratic loss.

\begin{equation}\label{lec1:1} L_{MSE}(f_w(x), y) := \frac{1}{2}||f_w(x)-y||^2 \end{equation}

The quadratic loss is differentiable and a smooth function. Also, the derivative is linear, making analysis very easy.

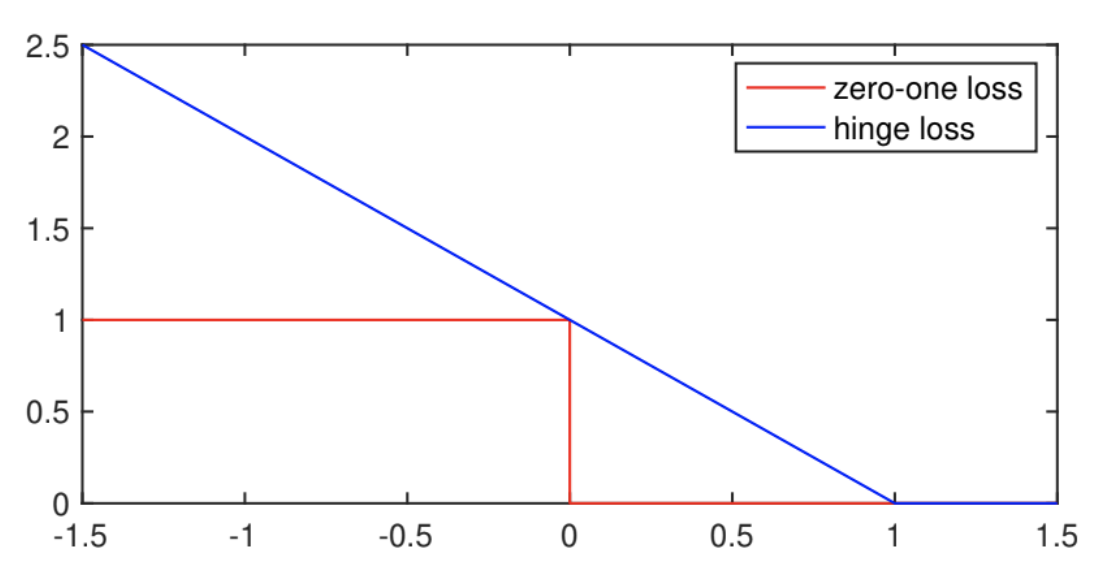

0-1 Loss

For a classification problem, \(f_w(x)\in \mathbb{R}\) but the labels can be in a binary field, \(y\in \{0,1\}\). So the real numbers \(f_w(x)\) need to be converted to binary labels, we can redefine them by:

\[\hat{y}= \begin{cases} 0 & f_w(x)\geq 0 \\ 1 & otherwise \end{cases}\]The zero-one loss function is not as convenient as the quadratic loss, since the zero-one loss is a non-convex function. Also, the derivative of the zero-one loss is mostly zero everywhere, meaning the gradient does not provide information to update the model parameters. In such situations, one typically optimizes a surrogate loss function instead, for example, we can use an approximation of the zero-one loss, called the hinge loss.

Hinge Loss

The hinge loss is formally defined as

\begin{equation}\label{lec1:2} L_{hinge}(f_w(x), y) := max(0, 1-yf_w(x)) \end{equation}

When $f_w(x)y$ is greater than one, the hinge loss is zero. Otherwise, it is a linear function w.r.t $f_w(x)y$. If you are familiar with support vector machines, the hinge loss is commonly used as a margin-maximization function. When a data point lies on the wrong side of a SVM boundary, the loss linearly increases as that point gets further away from the correct label. The hinge loss effectively maximizes the margin in SVM, creating a boundary that correctly classifies the binary labels (more on SVM for some another day).

Cross-entropy loss

Another popular loss for classification problems is the cross-entropy loss. Cross-entropy loss is based on maximum likelihood optimization, which is a method for estimating parameters of a probability distribution by maximizing a likelihood function. Cross-entropy loss measures the performance of a classification model whose output is a probability value between 0 and 1. So we need to convert $f_w(x)$ to probabilities, and we do this by using the sigmoid function. The sigmoid function maps real numbers to a number in (0,1), by

\begin{equation}\label{lec1:3} g(z) = \frac{1}{1+e^{-z}} \end{equation}

Now that all the outputs are probabilities, any probability above $\frac{1}{2}$, i.e. positive $f_w(x)$, a positive label will be predicted. Otherwise, a negative label will be predicted.

\[\begin{cases} P(y=1|x;w) = g(f_w(x)) \\ P(y=0|x;w) = 1-g(f_w(x)) \end{cases}\]The probability of the predicted label can be further simplified to

\begin{equation}\label{lec1:4} P(y|x;w) = g(f_w(x))^y (1-g(f_w(x)))^{1-y} \end{equation}

So our maximum likelihood optimization is the maximization over the model parameters of the likelihood of observing this data set from this model. Assuming independence among samples drawn from this model, the probability of observing this data set is the product of observing individual samples from this model

\begin{equation}\label{lec1:5} \max_{w} \prod_{i=1}^{N} P(y_{i}|x_{i};w) \end{equation}

To get rid of the product, since log is a monotonically increasing function, we equivalently get

\begin{equation}\label{lec1:6} \max_{w} \sum_{i=1}^N log P(y_i|x_i;w) \end{equation}

We have a formula for $P(y_i|x_i;w)$ defined in (4), so we now have, \begin{equation}\label{lec1:7} \min_{w} -\sum_{i=1}^N y \log (g(f_w(x)))+(1-y)\log(1-g(f_w(x)) \end{equation} This is the definition of the cross-entropy loss function.

This was for binary classification, where the sigmoid function is used to convert the real numbers to probabilities. In the multi-class case, the softmax function is commonly used. The softmax function is defined as, \begin{equation}\label{lec1:8} S(f_w(x_i)) = \frac{e^{f_w(x_i)}}{\sum_j e^{f_w(x_j)}} \end{equation} The softmax function takes the exponential of each class and divides that by the sum of the exponential of the previous classes, this effectively ensures probabilities summing to one. Since softmax is converting the real numbers to probabilities for multiple classes, it intuitively makes sense why the probabilities for each class for a sample should sum to one. In the case of multiple classes, the cross-entropy loss function calculates a separate loss for each class label per observation and sums the result. The new cross-entropy loss function can be defined as, \begin{equation}\label{lec1:9} -\sum_{i=1}^N y_{i} log (S(f_w(x_i)))) \end{equation}

Equivalently, \begin{equation}\label{lec1:10} -\sum_{i=1}^N y_{i} log (\frac{e^{f_w(x_i)}}{\sum_j e^{f_w(x_j)}}) \end{equation}

The mapping function (\(f_{w}\))

All deep learning models try to learn a mapping from an input to an output. This mapping (\(f_w\)) can be linear or non-linear depending on how the model is constructed.

For the case where $f_w$ is linear it can be written as: \begin{equation}\label{lec1:affine_eq} f_w(x) = w \cdot x+b \end{equation}

Here \(w\) is the weight to be learnt and b is the bias term. Having a linear mapping has it pros and cons. The pro being linear mapping with convex loss function like least squares ensures a global minimum and convergence, but it has limitations of what it can represent.

Non-linearity

Given linear transforms cannot represent complex decision surfaces, we can use an activation function (\(g(.)\)) which is applied over the output of a neuron to bring in non-linearity. Basically the transformed output can be written as:

\begin{equation}\label{lec1:activation} g(f_w(x)) = g(w \cdot x + b) \end{equation}

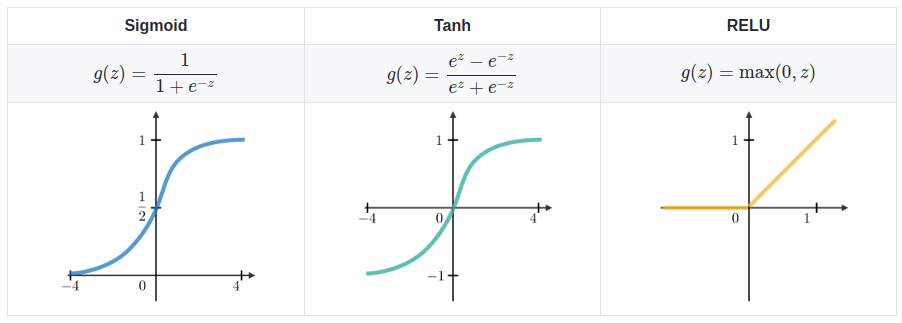

Adding such a function helps the network learn more complex surfaces and better fit to the data distribution. Some commonly used non-linearities are:

- Logistic/Sigmoid: \(g(z) = \frac{1}{1+e^{-z}}\)

- Tanh: \(g(z) = \frac{e^x - e^{-x}}{e^x + e^{-x}}\)

- ReLU: \(g(z) =max(0,z)\)

In addition to adding non-linearities through activation functions adding more layers also brings in non-linearity in the parameters.

Gradient Descent

Gradient descent (GD) is an iterative first-order optimisation algorithm used to find a local minimum/maximum of a given function. Gradient descent is best used when the parameters cannot be calculated analytically (e.g. using linear algebra) and must be searched for by an optimization algorithm.

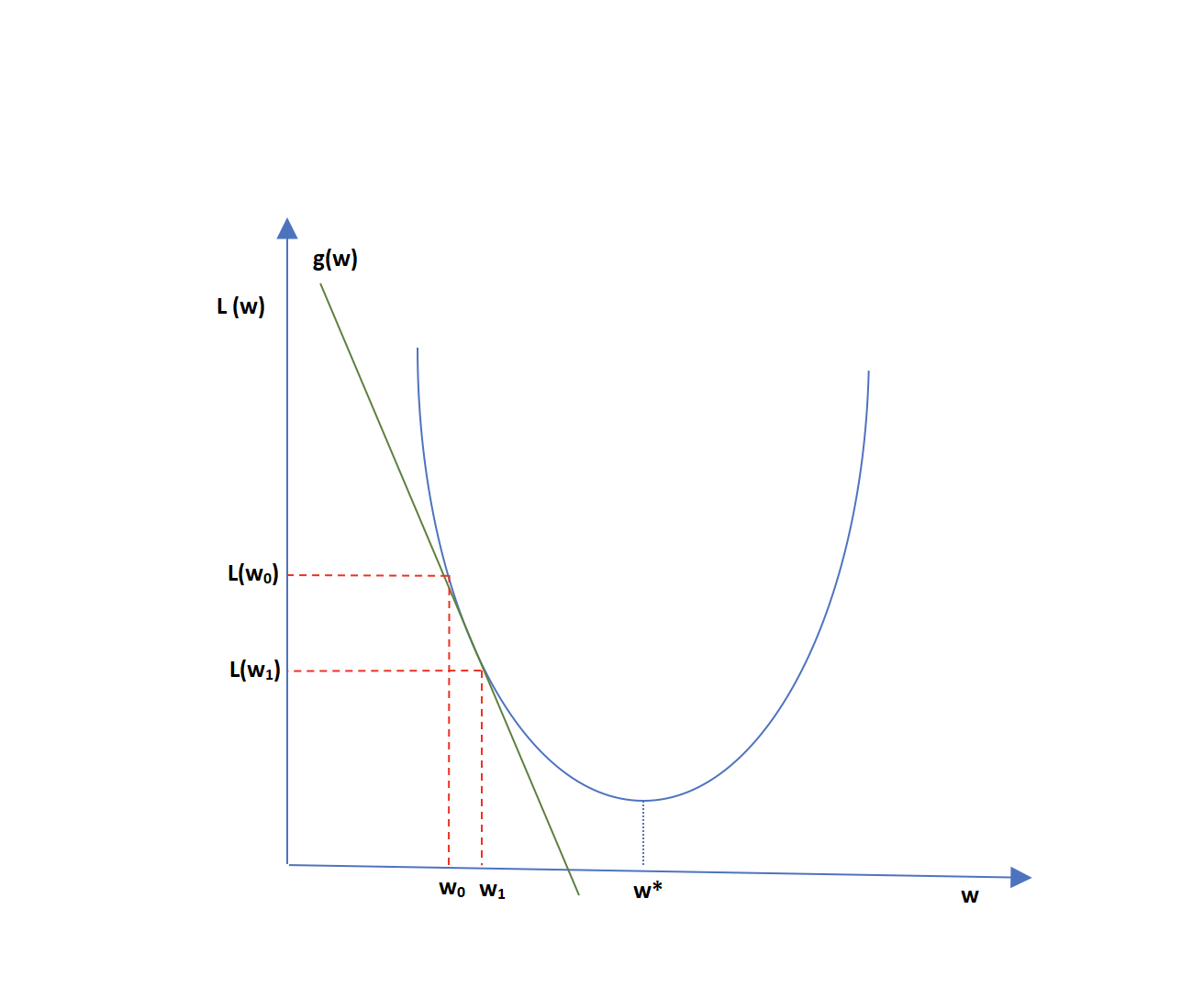

The gradient Descent algorithm takes steps proportional to the negative of the function’s gradient to compute the next most optimal point from the current point. If \(L(w)\) is convex, we can use gradient descent algorithm to reach the optimal value of \(w^{*}\). Let us assume we start with parameter set $w_0$ as shown in figure 6. The computed cost function at \(w_0\) will be \(L(w_0)\). Now the tangent line represented as \(g(w)\) is slope or gradient at point (\(w_0\), \(L(w_0)\)). Moving in the negative direction of this slope, we get \(w_1\) and \(L(w_1)\), where \(L(w_1) \le L(w_0)\). Moving similarly, computing gradient at \(w_1\) and then moving in the negative direction can lead us close to \(w^*\), which minimizes \(L(w)\). This is essentially the concept of gradient descent.

At any point t, the next set of values for parameter set can be computed by

\begin{equation} \label{lec1:eq:GD} w_{t+1} : = w_{t} - \eta \nabla_{ \boldsymbol { w } } L ( \boldsymbol { w }_{t} ) \end{equation}

where \(\eta\) is the learning rate or step size. Typically, Full Batch Gradient Descent involves gradient computation over all data samples.

\begin{equation} \nabla_{ \boldsymbol{w}} L(\textbf{w}_ {t}) = \frac{1}{N} \sum_{i=1}^{N}\nabla_{ \boldsymbol{w}} L(f_{w}(x_i),y_i) \end{equation} However, with large number of data samples, the complexity of gradient descent can be very high, making gradient update expensive in terms of computation.

Stochastic Gradient Descent

Stochastic Gradient descent uniformly picks a random sample to compute the next gradient instead of using all samples. Stochastic Gradient Descent (SGD) uses batch size of 1, in comparison to Batch Gradient Descent using the entire training set. The parameter update can be computed as:

\begin{equation} w_{t+1} : = w_{t} - \eta \frac{1}{|B|}\sum_{i \in B}\nabla_{ \boldsymbol{w}} L(f_{w}(x_i),y_i) \end{equation}

Another version of SGD is where \(1\le p \le m\), that is picking uniformly random \(p\) samples from total samples \(m\) and computing gradient term. This version is known as Mini-batch Gradient Descent.

SGD adds randomization to the vanilla gradient descent, which helps in overcoming saddle points and local minima at times. It has also been seen that SGD works well for non-convex optimizations.

Moreover, we have considered \(\eta\) to be constant for each iteration in the above equations, whereas there can be variations in learning rate, commonly which decay the value of \(\eta\) as iterations increase. The learning rate can be varied as the training proceeds by using adaptive learning rate regimes.

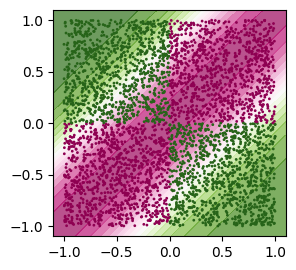

Learning by example: The XOR function

To make the idea of a neural network more concrete, we begin with an example of a fully functioning feedforward network on a straightforward task: learning the XOR function. The XOR function (“exclusive or”) is an operation on two binary values, \(x_{1}\) and \(x_{2}\). When precisely one of these binary values equals 1, the XOR function returns 1. Otherwise, it returns 0.

We want our network to perform correctly on the four points \(\mathbb{X} = \{[0,0]^{T}, [0,1]^{T}, [1,0]^{T}, [1,1]^{T}\}\). Now a neural network with no hidden layers (also called a single layer perceptron) cannot learn the XOR function. The reason is simple, the classes in XOR are not linearly separable. You cannot draw a straight line to separate the points (0,0),(1,1) from the points (0,1),(1,0).

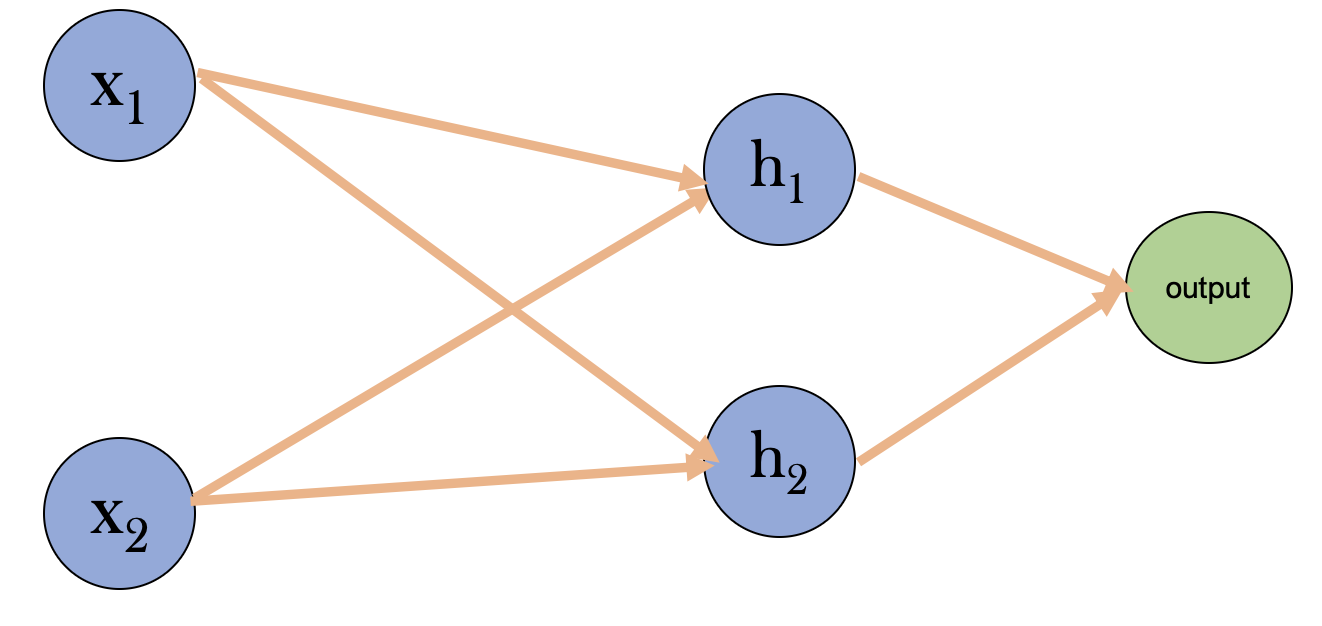

The forward pass

So let’s choose a neural network with a single hidden layer with two neurons. In that case, our input vector is 2-dimensional, and the output is 0 or 1. The weight matrix between the input and the hidden layer is denoted by \(w_{1}\) and has a dimension of \((2,2)\). In contrast, the weights between the hidden layer and the output (denoted by \(w_{1}\)) have dimensions \((1,2)\). In general, the dimension of the weight matrix for any arbitrary hidden layer is given by \((n_{l},n_{l-1})\) where \(n_{l}\) and \(n_{l-1}\) are the number of neurons in the current and the previous layer respectively.

During the forward pass, the input is passed through the network’s layers, and the output is computed based on the weights and biases of each layer. For our chosen network, the following steps include the forward pass:

- The input to the hidden layer: \(z_{1} = w_{1}\cdot x + b_{1}\)

- The activation of the hidden layer (using ReLU activation function): \(a_{1} = max(0, z_{1})\)

- The input to the output layer: \(z_{2} = w_{2}\cdot a_{1} + b_{2}\)

- The activation of the output layer (using sigmoid activation function): \(a_{2} = \sigma(z_{2})\)

The output \(a_{2}\) is fed to the cost function to calculate the error. \(J = - \frac{1}{N} \sum\limits_{i = 0}^{N} \large\left(\small y^{(i)}\log\left(a_{2}^{(i)}\right) + (1-y^{(i)})\log\left(1- a_{2}^{(i)}\right) \large \right) \small \tag{6}\)

The crucial backpropagation

The basic idea of backpropagation is to update the weights of the neural network by computing the gradient of the cost function with respect to the weights. The gradient is calculated using the chain rule of calculus and is propagated backward through the network to update the weights of each layer. For our case, the calculation proceeds as follows:

- \(\frac{\partial J}{\partial w_{2}}\) = \(\frac{\partial J}{\partial a_{2}} \frac{\partial a_{2}}{\partial z_{2}} \frac{\partial z_{2}}{\partial w_{2}}\) = \(\frac{(a_{2} - Y)}{a_{2}(1 - a_{2})} a_{2}(1 - a_{2}) a_{1}^{T} = ((a_{2} - Y)a_{1}^T)/ N\)

- \(\frac{\partial J}{\partial w_{1}}\) = \(\frac{\partial J}{\partial a_{2}} \frac{\partial a_{2}}{\partial z_{2}} \frac{\partial z_{2}}{\partial a_{1}} \frac{\partial a_{1}}{\partial z_{1}} \frac{\partial z_{1}}{\partial w_{1}}\) = \((w_{2}^{T}(a_{2} - Y) * max(0,1) x^{T}) /N\)

- \(\frac{\partial J}{\partial b_{2}}\) = \(\frac{\partial J}{\partial a_{2}} \frac{\partial a_{2}}{\partial z_{2}} \frac{\partial z_{2}}{\partial b_{2}}\) = \(\frac{(a_{2} - Y)}{a_{2}(1 - a_{2})} a_{2}(1 - a_{2}) = (a_{2} - Y)/ N\)

- \(\frac{\partial J}{\partial b_{1}}\) = \((w_{2}^{T}(a_{2} - Y) * max(0,1)) / N\)

While calculating the above gradients, I skipped many steps in calculating the partial derivatives. For example, the derivative of the sigmoid function with respect to \(z\) is given by \(\sigma'(z) = \sigma(z)(1 - \sigma(z))\). I would advise going through the derivation at least once, as the backprop is generally considered the trickiest part of the entire algorithm. The good thing, though, is that almost all the deep learning frameworks take care of the backpropagation step internally!

Updating the weights

Once we habe the gradient of the cost function with respect to the weight, we update the weights and biases following gradient descent algorithm.

\begin{equation} w = w - \eta \frac{\partial J}{\partial w} \end{equation}

At each iteration

Does it work?

Let’s try to implement the neural network with a single hidden layer to model the XOR function. As we advance, I will use the Pytorch deep learning framework

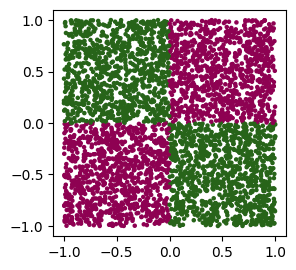

The first step in training a neural network is to get the data. The data’s quality and quantity are often the most crucial aspects of running an ML algorithm (as we will discover later). However, we know what our input data should look like for this specific case, and we can generate it accordingly.

X = np.random.uniform(-1, 1, size=(4000, 2))

y = ((X[:,0] >= 0) ^ (X[:,1] >= 0)).astype(float)I have generated pairs of random numbers between -1 and 1 and separated them into four quadrants. Each data point represents one of the four cases in the XOR function. The corresponding output labels are either 0 or 1. Next, we define the neural network.

# Define the neural network

class XOR_Net(nn.Module):

def __init__(self, input_dim, hidden_dim, output_dim):

super(XOR_Net, self).__init__()

self.fc1 = nn.Linear(input_dim, hidden_dim)

self.sigmoid = nn.Sigmoid()

self.fc2 = nn.Linear(hidden_dim, output_dim)

def forward(self, x):

out = self.fc1(x)

out = self.sigmoid(out)

out = self.fc2(out)

return outIn PyTorch, the forward() function is a method of the neural network class that defines the forward pass computation of the network. It takes an input tensor as an argument and computes the output of the network by applying a series of transformations to the input. Finally, we can write a training loop to train the network and then evaluate on a separate test data.

# Define the cross-entropy loss function and Stocastic Gradient Descent (SGD) optimizer

criterion = nn.BCEWithLogitsLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=0.15)

# Train the neural network

for epoch in range(10000):

# Forward pass

outputs = model(torch.from_numpy(X_train))

loss = criterion(outputs, torch.unsqueeze(y_train,1))

# backpropogate through the loss gradiants

loss.backward()

# update model weights

optimizer.step()

# remove current gradients for next iteration

optimizer.zero_grad()

if (epoch+1) % 500 == 0:

print('Epoch [{}/{}], Loss: {:.4f}'.format(epoch+1, 10000, loss.item()))# Making predictions on the test data

assert isinstance(model, nn.Module)

np.random.seed(42)

X_test = np.random.uniform(-1, 1, size=(4000, 2))

y_test = ((X_test[:,0] >= 0) ^ (X_test[:,1] >= 0)).astype(int)

prediction = model(

torch.tensor(X_test, dtype=torch.float32).to(device)

).cpu().detach().numpy().squeeze()

accuracy = ((prediction > 0.).astype(int) == y_test).mean()

print("Accuracy:", accuracy)

The accuracy of the test data is about 83% which is good for a single hidden layer neural network. You can quickly check that a) increasing the number of neurons in the hidden layer and b) increasing the number of hidden layers increase the model’s performance up to a certain point. These numbers are the model’s hyperparameters, and tuning them to improve overall performance is known as tuning. Hyperparameter tuning is a crucial part of the deep-learning models, which I will discuss in detail in my next post (hopefully sometime early next week!!)

I have attached a couple of jupyter notebooks that cover the basics of numpy and pytorch, along with a few examples of slightly bigger deep-learning models and different aspects of training them. Both notebooks were part of an assignment for a summer school I attended in the summer of 2020. GIve it a try!.

Finally, anyone wanting to get into the foundations of deep learning should check out these lectures from the University of Maryland